Traditional grades are not objective

A mathematician’s thoughts about numbers

A recent article about ungrading did a nice job of interviewing proponents of alternative grading – including my coauthor Robert – and discussing the philosophy behind it. But it also included some head-scratching quotes from an alternative grading skeptic. The author seems to have brought in the skeptic to provide “balance” to the article, but then accepted the skeptic’s claims without question.

Key among those claims is the idea that traditional grades – points, partial credit, and percentages – are objective, and that by changing or getting rid of traditional grades, we’ve done away with objectivity.

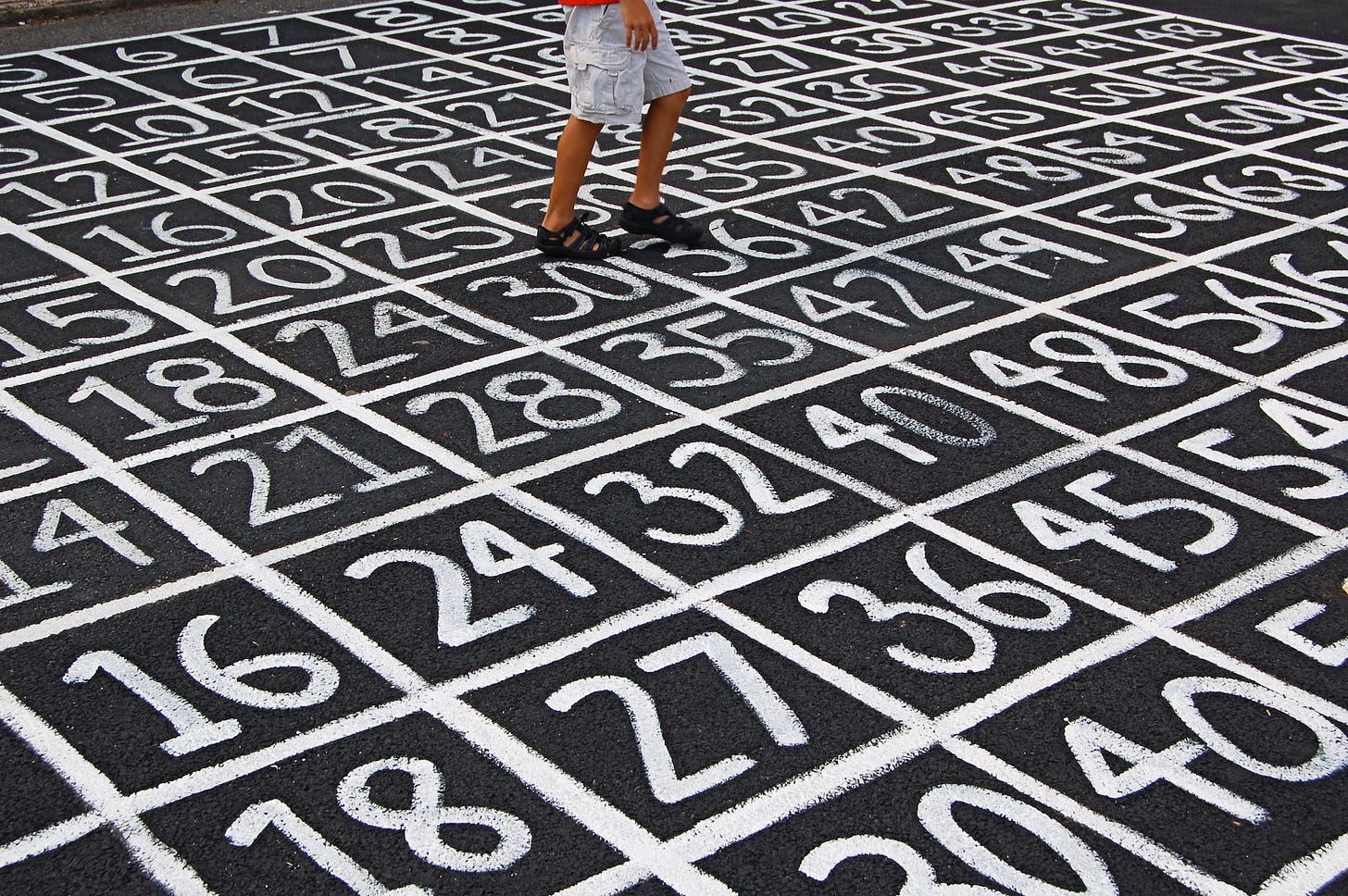

It’s a bit unclear what the skeptic actually means by “objective”. Luckily, as a mathematician, I’ve faced this issue often. So today, let’s dig into the many ways that traditional grades are anything but “objective”.

By “traditional grades”, I mean the familiar system in which students earn points or percentages (including partial credit) on individual assignments. Those points are put through some sort of weighted-average formula (e.g. “60% exams, 30% homework, 10% attendance”) and then translated into a letter grade.

Traditional grades don’t represent student learning

One possibility is that our skeptic means that traditional grades give a clear, unambiguous, objective view of what a student knows or has learned in a course.

Let’s think about this through an example. This example is one that’s often used to illustrate problems with traditional grading, and we’ve used it before, so please bear with me if this looks familiar.

Suppose Alice and Bob take a class where the only assessments are three 100-point exams. Alice’s grades on these are 0, 80, and 100, while Bob’s grades are 60, 60, and 60. Both students earned 180 points out of 300, or an average of 60% – borderline between a D and an F in most marking systems.

Take a look at those nice, objective numbers: 180 points, a 60% average. Clear and unambiguous, right?

Wait… what does that 60% average mean? Although both students have the same average, their trajectories were wildly different, and that hints at major differences in learning. It appears that Alice grew in her understanding and eventually aced the third exam. It seems likely – although we can’t be sure – that by the end of the semester, Alice fully understood many of the topics in the class. Bob bumped along, showing no particular progress at all.
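For readers who like to see the arithmetic, here is a minimal Python sketch of the comparison above. The exam scores come straight from the example; the `summarize` helper and the “change from first to last exam” figure are just illustrative choices, not an established way to measure growth.

```python
# Exam scores from the example above (three 100-point exams each).
scores = {
    "Alice": [0, 80, 100],
    "Bob": [60, 60, 60],
}


def summarize(exam_scores, points_per_exam=100):
    """Return total points, percent average, and change from first to last exam."""
    total = sum(exam_scores)
    possible = points_per_exam * len(exam_scores)
    percent = 100 * total / possible
    change = exam_scores[-1] - exam_scores[0]
    return total, percent, change


for name, exam_scores in scores.items():
    total, percent, change = summarize(exam_scores)
    print(f"{name}: {total} points, {percent:.0f}% average, "
          f"change from first to last exam: {change:+d}")
```

Both students come out at 180 points and 60%, while the change from first to last exam is +100 for Alice and +0 for Bob. The average throws that difference away.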

What does Bob’s 60% mean? Does he understand 60% of the class topics thoroughly, and have no understanding at all of the other 40%? Does he have a moderate understanding of everything, but doesn’t fully understand anything? Were these exams cumulative, and he understood the same 60% each time? Does Bob have a great understanding, but suffer from extreme test anxiety? The grade average can’t tell us.

Even Alice’s individual grades don’t tell the full story. Why did Alice earn a 0 on the first exam? Did she not understand anything? Did she miss the exam entirely because she slept in? A “zero” has no inherent meaning; it’s silent on what Alice actually knows.

So while these numbers look objective, they aren’t. Points, partial credit, and percentages appear to give us clear and unambiguous information about what a student has learned, and that appearance is something that we’re all very used to. But the appearance doesn’t survive even a surface-level examination. Averaging produces a mash-up of partial credit, partial understanding, the student’s environment, and any number of other things.

The main benefit of using traditional grades is that they’re familiar, and that familiarity lulls us into trusting the numbers. The formula produces the same number, so we assign Alice and Bob the same (failing) grade, and it feels “objective”.

Grades can have meaning, and can show parents and students “what they’re getting” from a class. To do that, we need to link student learning to clear standards that describe what matters in our classes. Once we’re using clear standards, there’s no need to grade them with traditional methods at all: It’s both easier and clearer to report a progress indicator like “Exceeds expectations” or “Not yet” rather than hoping that others can bring some sort of useful interpretation to a muddy, ill-defined number.

Traditional grades are determined by humans

Perhaps our skeptic means that grades are “objective” because they are numbers, and numbers are, well, inherently objective, right? They’re numbers; how can they be subjective?

Easy: Humans determine the numbers.

Let’s think back to Alice and Bob. Perhaps exams are 70% of the final grade, but participation is 30% and is determined by completing some clicker questions during class. Both students have exactly the same exam average that we saw earlier, but their participation grades are rather different.

Suddenly, Alice is clearly failing, and Bob is doing OK – earning a C- or maybe even a C. What happened? Perhaps Alice arrived five minutes late every day (missing the “attendance” clicker question) because she had to drop off her kids at daycare, but then took excellent notes and engaged fully afterwards. Maybe Bob sat in the back, pushed buttons randomly, and played around on social media but got credit for being there. Maybe I just made that up: We can’t tell from the numbers.

Even with exactly the same performance on exams, Alice and Bob are now nowhere near each other. Nobody would look at their final grades and think that they had comparable performance.
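To make the weighting concrete, here is a rough Python sketch of the 70% exams / 30% participation formula. The 60% exam average and the weights come from the example; the participation scores below are hypothetical values invented purely for illustration, and `final_percent` is a made-up helper name.

```python
def final_percent(exam_avg, participation, exam_weight_pct=70):
    """Weighted average of an exam average and a participation score (both 0-100)."""
    participation_weight_pct = 100 - exam_weight_pct
    return (exam_weight_pct * exam_avg
            + participation_weight_pct * participation) / 100


EXAM_AVG = 60  # both students have the same exam average, as in the example

# HYPOTHETICAL participation scores, chosen only for illustration.
participation = {"Alice": 20, "Bob": 100}

for name, score in participation.items():
    print(f"{name}: {final_percent(EXAM_AVG, score):.1f}% overall")
```

With these invented participation scores, Alice ends up at 48% and Bob at 72%, even though nothing about their exam performance has changed. Pick a different weight or a different way of scoring participation and the gap moves again.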

The real difference is that somebody – an instructor, a human being – made a decision about how to measure “participation” and how to average that number into the final grade. Even though Alice and Bob’s participation grades are “objectively” measured by clicker questions, a human decided to measure participation that way, and a human decided that this number would be worth 30% of their final grade. These human decisions can make huge differences, and the example above illustrates how those differences can have little or nothing to do with what Alice and Bob actually know.

This arbitrariness extends throughout traditional grades. Humans decide what counts and doesn’t count towards a final grade. Humans decide what points are granted for a student’s work. Humans decide how to average, weight, or otherwise combine those points into an overall number. And finally, humans determine the cutoffs for turning points or percentages into letter grades.

Everything I listed above involves decisions that are made by humans, and so traditional grades are inherently based on the results of our human assumptions, guesses, mistakes, and biases.[1]

Let’s look at that last factor – determining numerical cutoffs for letter grades – a little more closely. The difference between 79.9% and 80.1% is the difference between a “C” and a “B” in most grading scales. Is that 0.2% difference really meaningful in terms of learning, or anything else? If one instructor sets an “A” at 90% or above, but another begins the “A” range at 93%, does that represent a meaningful difference?
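Here is a small Python sketch of how much work those cutoffs do. Only the 80% line for a “B” and the 90% versus 93% lines for an “A” come from the discussion above; the remaining cutoffs, the scale names, and the `letter_grade` helper are assumptions made for the sake of a runnable example.

```python
def letter_grade(percent, cutoffs):
    """Return the first letter whose minimum percent is met.

    `cutoffs` is a list of (minimum_percent, letter) pairs, sorted high to low.
    """
    for minimum, letter in cutoffs:
        if percent >= minimum:
            return letter
    return "F"


# Two ASSUMED scales that differ only in where the "A" range begins.
SCALE_A90 = [(90, "A"), (80, "B"), (70, "C"), (60, "D")]
SCALE_A93 = [(93, "A"), (80, "B"), (70, "C"), (60, "D")]

# A 0.2-point gap straddling the 80% line changes the letter.
print(letter_grade(79.9, SCALE_A90), letter_grade(80.1, SCALE_A90))

# The same 91% earns different letters under the two scales.
print(letter_grade(91, SCALE_A90), letter_grade(91, SCALE_A93))
```

The first line prints `C B` and the second prints `A B`. The student’s work is identical in each pair; only a human-chosen threshold differs.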

Grade cutoffs are arbitrary values determined by humans, a mash-up of tradition and attempts at standardization that have happened piecemeal over the course of decades (the history of our current “traditional” grading scales is a topic for a whole other post).

Thinking that a grade is “objective” because it comes out of a formula requires us to ignore where the formula and all of its inputs came from. The numbers that humans assign are based on human decisions, human-created rubrics, and human priorities. Putting them into a human-created formula doesn’t remove the fact that humans made them in the first place.

This isn’t to say that human decisions are inherently bad, or even that subjectivity is necessarily bad. Every day, educators use their very real expertise to design classes, teach students, create assessments, and interpret the results. These are fundamentally subjective processes. But they can also be valuable, human processes that focus on the real people that we’re working with: processes that acknowledge how humans learn and design meaningful experiences around that learning. Putting objective-looking numbers on those processes, however, can hide the subjective nature of the decisions involved.

Traditional grades are “objectivity theater”

The issues I’ve described above show that traditional grades merely masquerade as “objective”. Traditional grading gets its veneer of objectivity from using math, but numbers and formulas are only as objective as the people who create them. This is a problem that goes far beyond grading, and has huge implications for the modern world (seriously, click that link and check out the book). The name for this “veneer of objectivity” that hides deeper, and quite complex, issues of student learning is “objectivity theater”.

A few months ago, Robert wrote in great detail about why treating points and partial credit like numerical data is a bad idea. I won’t rehash his whole blog post here, but in short: Averaging doesn’t make sense when applied to certain kinds of numbers (like ZIP codes), and point-based grades fall squarely into that category. The very way we process and reduce traditional points-based grades into a final grade is itself flawed, but those flaws hide behind the thin veneer of objectivity provided by “math”.

Finally, a word of caution. To some extent, focusing on the objectivity – or lack thereof – of traditional grades is a red herring. If we spend all of our energy seeking a perfectly objective way to calculate a student’s grade, we ignore what we’re assessing and how we’re assessing it. We also miss out on the need for feedback loops, for reassessments, for valuing the many ways that students can show us what they know, and for understanding the effects that grades have on students in the first place.

Alternative grading offers, well, an alternative. Not just in getting rid of the fake objectivity of numbers or removing arbitrary formulas, but in recognizing that we can do better by focusing our assessments on supporting how humans really learn.

Don’t be hoodwinked by the supposed “objectivity” of math. Take it from me, I’m a mathematician.

[1] Don’t forget that student evaluations of teaching are typically number-based, too.