Six ways to use artificial intelligence tools in alternative grading

Three for faculty, three for students

Teaching and learning are two of the most distinctly human activities that a person can engage in. With alternative grading practices, what we really seek is to place the humanity of both instructors and learners at the center of education. Persistent engagement with feedback loops is not only the primary way but, I believe, the only way that humans learn anything of significance. By engaging in those loops we become more human ourselves, and building a class around that engagement is both an opportunity and a privilege for us instructors.

But it can also be very hard work, and we need all the help we can get – not only from other humans (which is why you’re here at this blog, hopefully) but also from technology. Many of us already lean quite heavily on technology to help in our grading practice, and whenever there is a chance that some new technology might help us do the human work of teaching better, it’s worth a look.

I believe that generative artificial intelligence tools fall into that category of powerful technologies that can help and are therefore worth a look. In this post, I’m going to share some ways in which generative AI can be a productive partner in our work with alternative grading.

Where I stand on generative AI

I know the readership of this blog pretty well and I know that some of you have very strong negative opinions about generative AI in education. I would ask that you hear me out.

I am a “techno-pragmatist” with respect to AI. I neither mindlessly embrace it nor mindlessly resist it, but am convinced that it has the potential to be a powerful tool for helping humans do uniquely human things, when used with appropriate care. It is an epoch-defining technology that demands my attention as both a teacher and a scholar. I am committed to engaging with AI in that role: as a teacher-scholar, with an open and active mind, grounded in larger contexts and truths, and coming to my own conclusions based on data and, importantly, my experiences.

I cannot in good faith accept or reject something I don’t understand, and I can’t understand something I haven’t experienced. So for the last several months, I’ve been setting aside 30-60 minutes each working day to engage with AI tools in some way: learning how different ones work, using them to do simple tasks, using them to do real work, pushing their boundaries, thinking about the results. A lot of what’s in this post is an after-action report of those experiences.

I am aware that there are concerns about AI: its impact on human cognition, its impact on the environment, and more. I’ve looked into these as part of my AI engagement strategy and, honestly, for me the jury is out on all those concerns. I’ve found no concern or issue that categorically leads me to stop exploring AI.[1] I’ll keep my mind open to changing my approach in the future as I learn more.

I’m happy to go into more depth and hear your responses to all that, in the comments.

But for now, here are three ways that AI can be useful for faculty doing alternative grading, and three ways it can be useful for students in alt-graded courses.

Faculty use #1: Making clearly defined standards

In our usual visual about the Four Pillars of Alternative Grading, “clearly defined standards” comes first if you read it from left to right. It also comes first in two other senses: writing standards is typically the first thing you do when building a course, and it is, in many ways, the most important of the pillars. It’s hard to implement any of the other three pillars if you don’t have clearly defined learning outcomes.

Writing a good set of standards seems to require threading three different needles: Your standards must be simple and easy for students to understand, they must capture all the important ideas of the course, and there can’t be too many of them. Getting two out of those three to work doesn’t seem so bad, but getting all three can be devilishly hard. This is where AI can help.

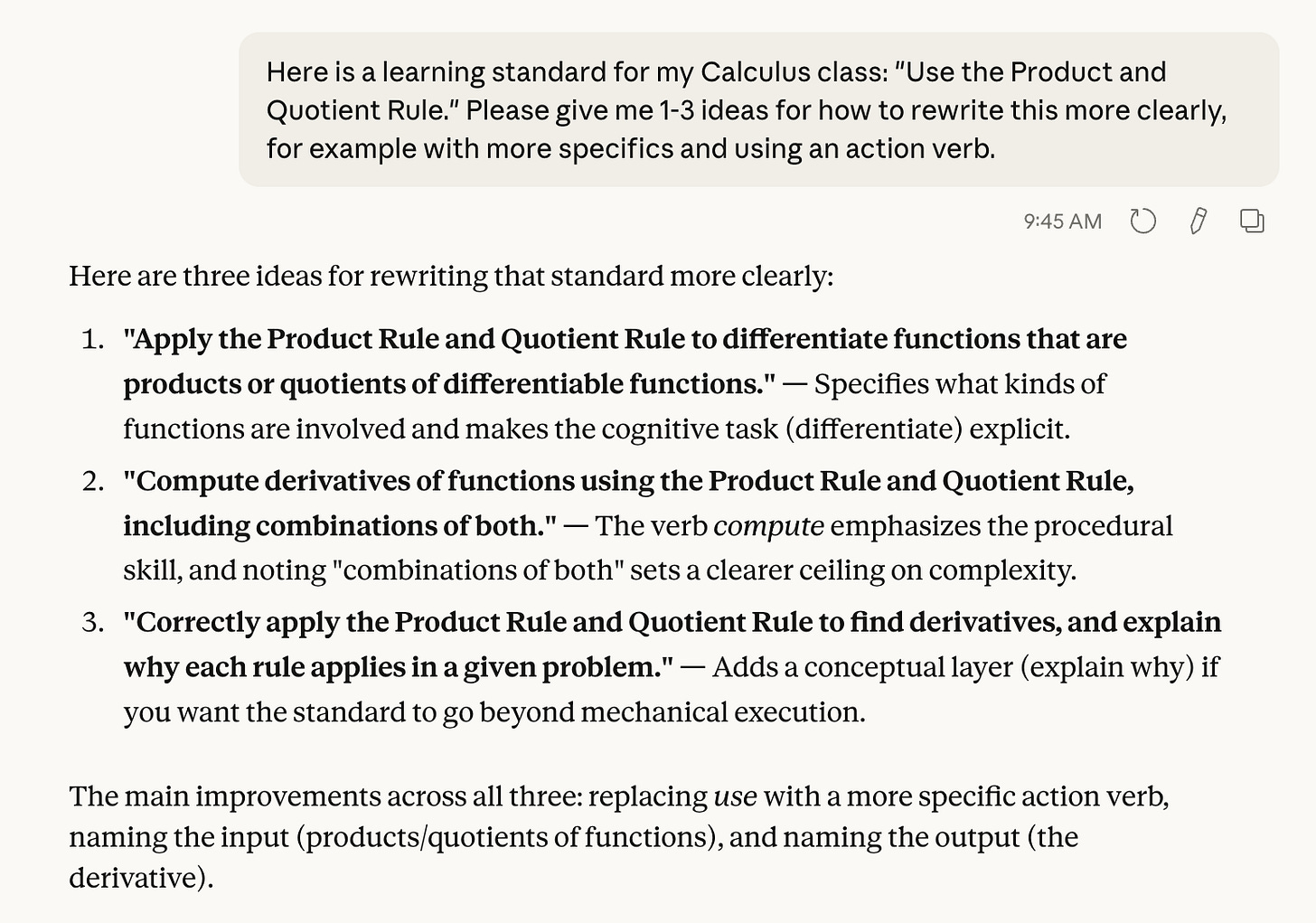

If you have a standard that seems important, that you want to assess, and that belongs in your list, but you’re unsure about its clarity, an AI tool can help you clarify it, as in this example:[2]

Note how the prompt was phrased: “Please give me ideas”. We’re not asking the AI to write the standards for us so that we can use the results without thinking about them; that’s the very thing we should be avoiding. Instead, we’re just asking for help. As the instructor, you can take or leave the results, or make a remix that uses the best parts of each.

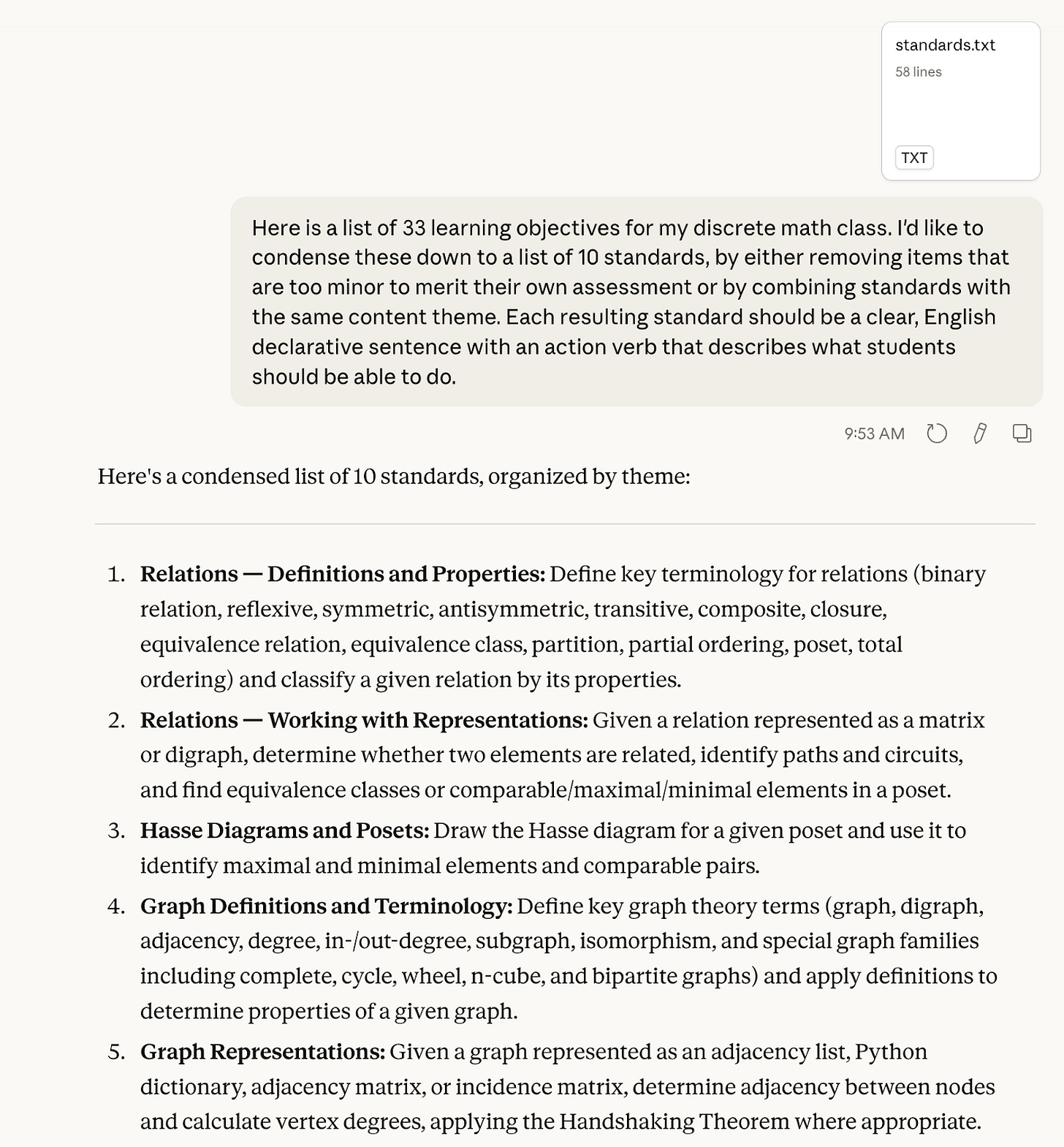

If you have a bunch of standards that all seem good, but there are too many of them, you can ask AI to cut or consolidate them for you.

The original list I gave Claude is here; the list of standards that Claude generated continues past the bottom of the screenshot. I don’t think I would use verbatim what Claude generated, mainly because this seems to consolidate quite a lot of concepts under a single standard, to the point of being double- or triple- or many-barrelled. (Maybe asking for 16 instead of 10 would help spread them out a bit.) But it would certainly get me thinking concretely about thematically grouping standards into assessable units.

Fun fact about this example: Long-time readers will know that my first attempt at specifications grading in 2015 involved 68 learning standards for my course. The 33 standards in the file I gave Claude were part of that list. So had an AI tool like this been available to me in 2015, I might not have spent the entire semester in grading jail, trying to manage 68 sets of assessments every week.[3]

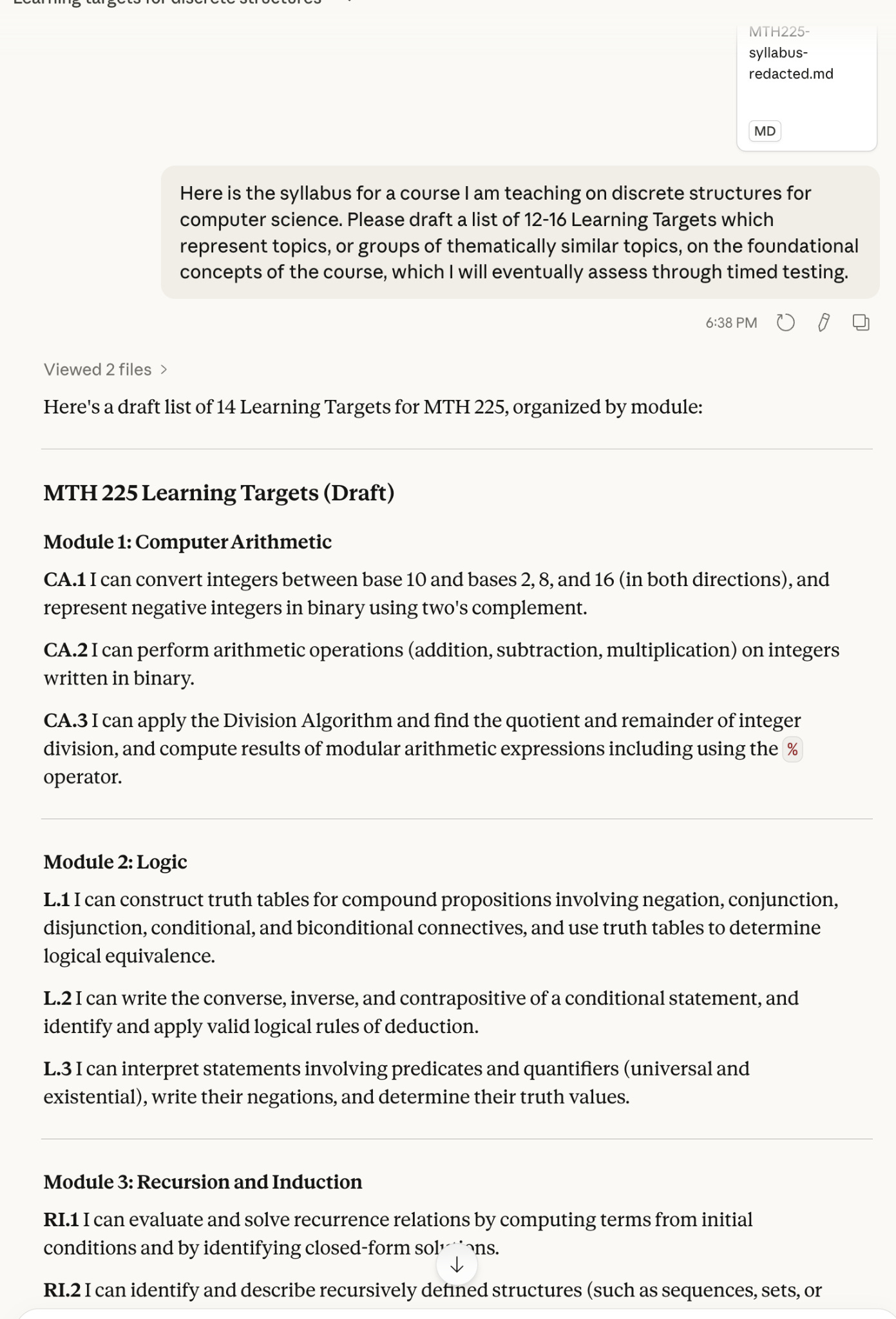

Or, if you just have no idea where to start with your standards, you can feed an AI tool your course materials and any other relevant information and ask it to come up with a list itself. As an exercise, I took the syllabus for one of the courses I am currently teaching, removed all references to learning targets from it, gave the syllabus (which contained the course description) to Claude, and asked it to create Learning Targets for the course. It produced a list of 14 targets that mostly coincided with the ones I had written myself, which appear in the appendix to the syllabus.[4]

There was so much overlap between my human-generated Learning Targets and Claude’s that I would feel comfortable in the future using Claude to double-check my work in a new course where I needed to write standards from scratch.

Faculty use #2: Generating reattempts

While clearly defined standards are the first and perhaps the most important pillar, the real heart of alternative grading is the fourth pillar, reattempts without penalty. This is also one of the hardest pillars to implement because of the time involved, not only in grading reattempts but in writing them in the first place. Here too, AI can be extremely useful.

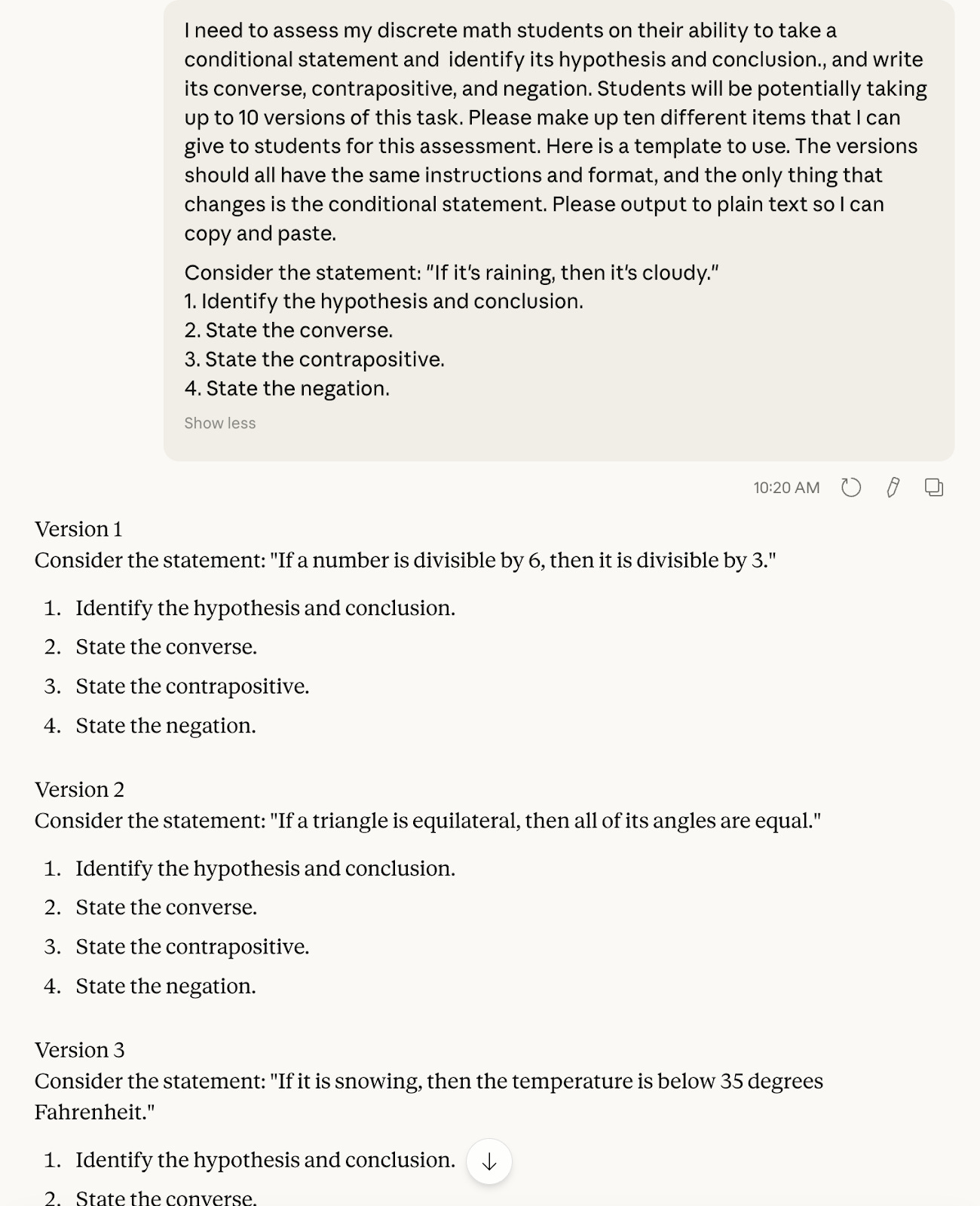

I avoid using AI for creative work, including making teaching materials like flipped-classroom video content or small group activities for class. But I have no problem doing something like the following, which is anything but creative:

And just like that, I’ve drafted the entire semester’s worth of assessments on this particular learning target in about the same amount of time it took me to write the prompt. Again, however, you as the instructor need to remain in the loop and not just accept the LLM’s output. Here for example, I might want to vary the phrasing of the conditional statements so it’s not always “If A, then B” (but perhaps “B if A”, or “A is sufficient for B”, etc.).

Faculty use #3: Making exemplars

When asking students to do higher-level work, it’s good to have examples of work that meets the standard to varying degrees, as well as work that fails to meet it for different reasons. This really is creative work on the part of professors, so much so that it can be hard to create something that truly hits the mark with students. This is a good use case for an AI tool, at least for giving an assist:

The example from Claude goes on to explain why the first response is well written and the second is poorly written. Having seen one decent exemplar,[5] it’s easier for me as a human instructor to make more.

You could follow this up in class with an activity where students grade these two responses using your standards. Alternatively, you can teach students how to do this for themselves, which brings us to our next group of three use cases.

Student use #1: Deliberate practice

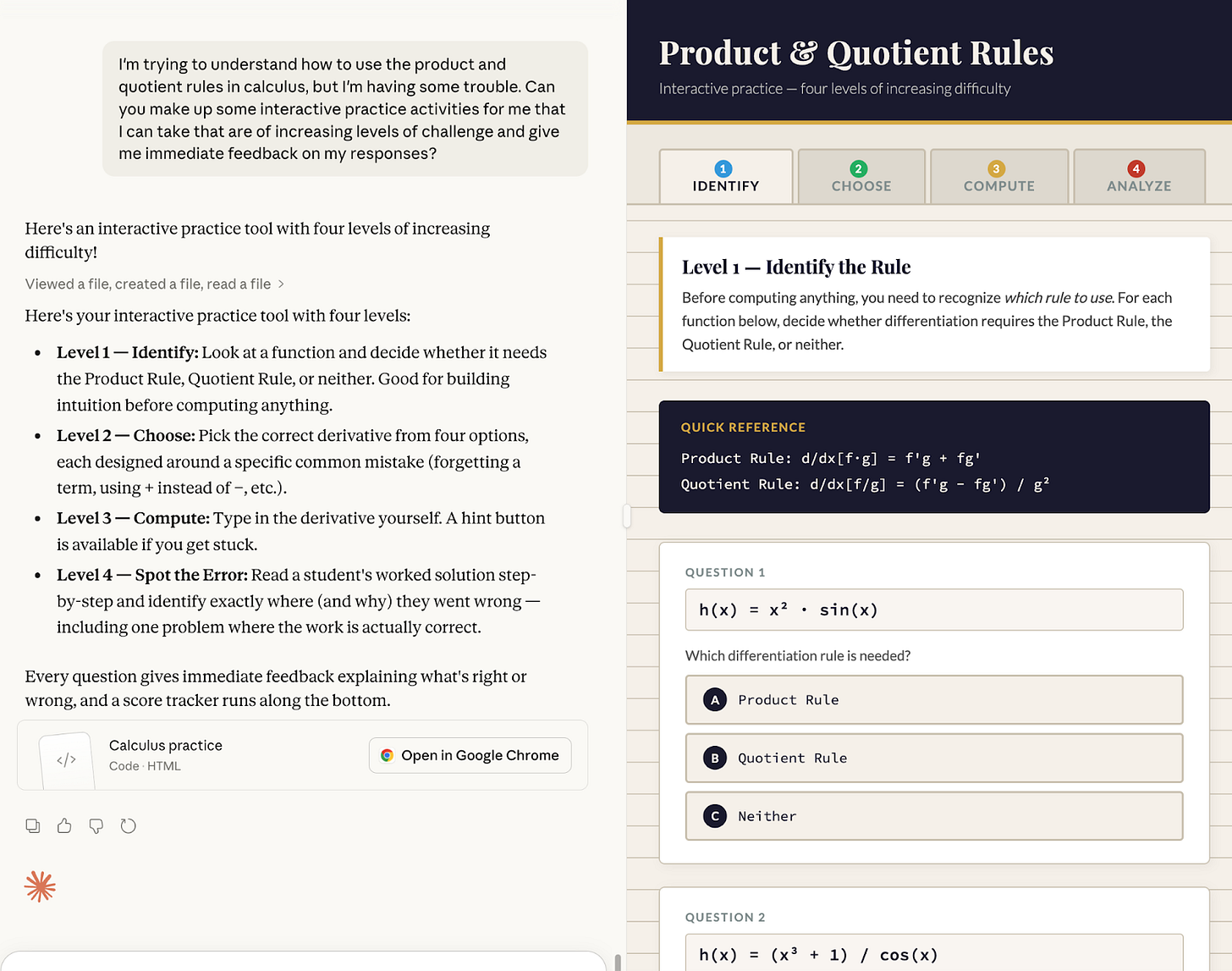

I’ve written here a lot lately about the role of deliberate practice in alternative grading. In those posts I noted some defining characteristics of this kind of practice, including an intentional design to improve performance, the ability to be repeated indefinitely, and continuous availability. When I was a student, I never heard the word “practice” applied to learning math – that was something I did for music class, not math. Perhaps that’s because, unlike in music classes, where time-tested exercises and practice regimens were and still are common,[6] the means of practice in a math class were limited to the small number of fairly narrow exercises in my textbooks. But students today can do things like this:

This is a fully realized web app that runs in a browser. Not only is the math correct, but the app also does a really nice job, pedagogically, of scaffolding the complexity of the tasks.[7]

AI makes the availability of useful practice tools less of an issue. What is still an issue is inculcating the mindset of practicing in students and creating a culture of deliberate practice in our classes. For example, a student who has never had a discussion with their prof about deliberate practice might not think to add the bits about “increasing level of challenge” and “immediate feedback” to the prompt. And that is where you and I, as the human instructors, come in — to initiate and sustain discussions about what kinds of practice are truly useful.

Student use #2: Decoding feedback

In the feedback loops we describe here at the blog, a learner first attempts a task and then receives feedback from a trusted third party. But there’s an important third step: making sense of the feedback. We try to give helpful feedback, but despite our best efforts, students sometimes don’t speak the language (perhaps literally) and need help decoding what we tell them.

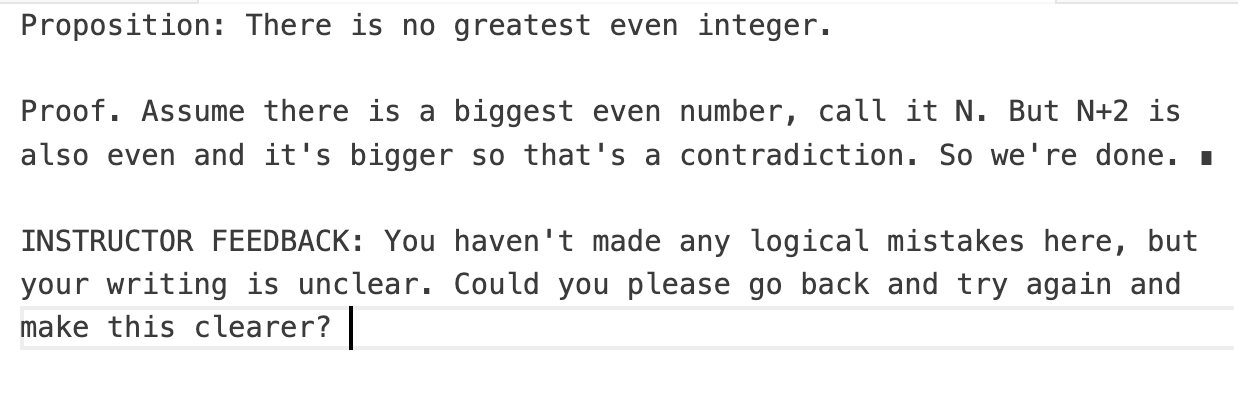

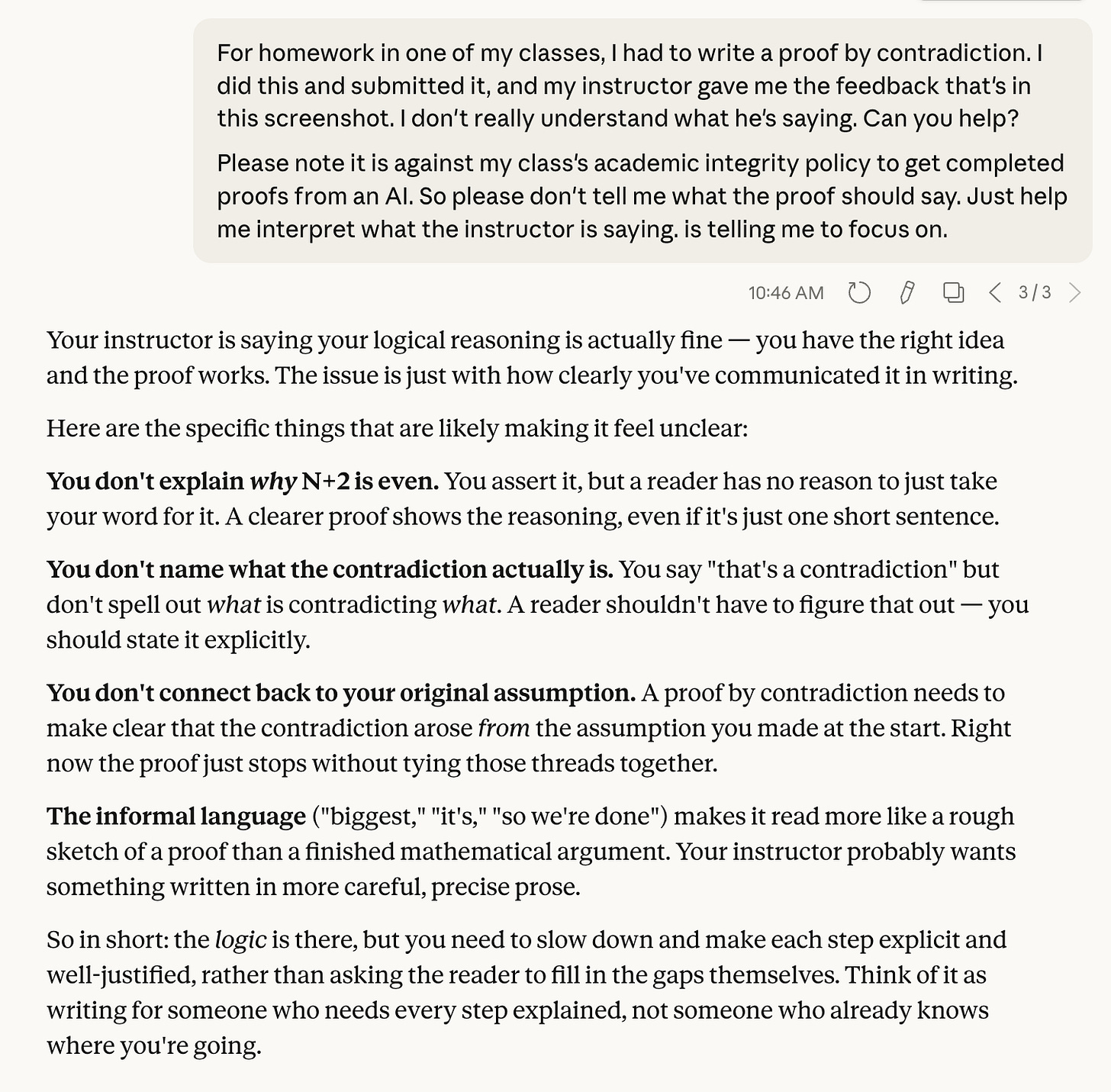

In this example, I’m pretending to be the student who wrote the poorly written contradiction proof from earlier, and I’ve made up some instructor feedback that is trying to be helpful but isn’t especially clear.

Here I am, acting as the student asking Claude for help in interpreting the feedback:

Now, there is a big caveat with this use case, and you can see it in the final paragraph of the prompt. In the original version of this example, I didn’t have that paragraph, and Claude produced a completely corrected proof, which would then be very easy for me to copy and paste. If the student doesn’t explicitly restrict the LLM to just the part of the feedback loop about interpreting what the feedback says, the default behavior of the tool will likely be to do the rest of the work for the student.

Obviously, this is not what we want, and its heightened likelihood, thanks not only to AI but to the internet in general,[8] is a serious problem. But I believe we’re not going to solve the problem by banning AI technology from classes, as if any such ban would be more than a performative statement in our syllabi. A better solution is to communicate with students about the right and wrong ways to complete their work, and about the role of reattempts without penalty in doing it honestly. That communication should include, but definitely not be limited to, sample boilerplate about academic integrity, like mine above, that students can add to their AI queries. This may not eliminate cheating, but it will at least lessen its value proposition.

Student use #3: Scaffolding metacognition

In an ungrading/collaborative grading situation, students are tasked with assembling a portfolio of their work throughout the semester and then using it to make a case for themselves at the end of the course for the grade they should receive. It’s been well documented by instructors using collaborative grading, including many of our guest authors, that the part about making a case for oneself is easier for some students than others. Many students struggle to pull together a narrative that accurately explains the arc of their learning journey and puts them in a positive light, even when their portfolio of work is consistently excellent.

Here is where AI can be very helpful to these students. I don’t have an example of this to share, but hypothetically it could work like this: a student gathers the items for their portfolio as individual files in a folder, uploads them to an AI tool along with the syllabus and standards for the course, and asks the AI to help them tell their own story. For example: “I believe I have met the standards for a grade of B in this course, but I’m having trouble creating a brief narrative explaining why. Can you give me ideas for how to talk about this with my professor?” Students should not simply use what the LLM tells them as if it were their own reasoning; that is a standard caveat to everything here. But in the spirit of this article, where we are enlisting AI as a helper just as we might enlist a trusted human, this can be quite helpful indeed.

Conclusion: One thing we should never do

I want to end this post by mentioning one thing, other than cheating, that we should absolutely not be doing with AI: We should not use AI to actually grade our students’ work.

It’s very tempting to say that generative AI offers us the opportunity to implement alternative grading at scale, by pulling in folders full of student work as PDFs and distributing feedback out to students that they can then work on. AI seems to offer a solution to one of the most vexing problems of alternative grading: how to do it for large classes.

But there are all kinds of reasons why this is a bad idea. One of the main ones is privacy: I can’t think of a single LLM tool that’s FERPA compliant. (I could be wrong, so correct me in the comments.) More generally, though, grading is supposed to happen in the context of a relationship between professors and students. Taking ourselves out of the feedback loop is categorically against the spirit of alternative grading and just not a good move generally speaking, and we need to avoid using tech of any sort to do it.

If you’re an AI user and have other helpful use cases to share, drop them in the comments!

[1] The closest I’ve come is that I have 100% disinvested from using Perplexity and the Comet browser, after Perplexity ran a viral marketing campaign aimed at students that explicitly touted Comet’s usefulness for cheating on assignments.

[2] This and all the other examples here used Claude Sonnet 4.6.

[3] An even more fun fact about this example: While preparing this blog post, I tried to go back and find the full list of 68 learning objectives. Sadly, I had apparently uploaded that list to our LMS at the time and then deleted the original, and the course had since been purged from the LMS. So it appeared that the list of all 68 objectives had been lost to history. But I realized I could use AI to help here as well: I downloaded an archive folder that contained all of my course documents, assessments, and activities for that class, and then set Claude Cowork to work inside this folder to reconstruct my list of learning objectives, either by finding explicit references to them on assessments or other documents, or by inferring what they might have been from the syllabus and other course materials. Claude was able to find explicit statements of 56 of the 68 learning objectives, and then, by cross-referencing my materials with a table of contents for our textbook (which was not included in the archive!), it was able to make plausible inferences about the other 12. So, like an archaeologist, Claude reconstructed my original list of 68, which you can now see in all its glory at this link.

[4] Note that it even uses the “I can…” formulation for the Learning Targets, which I did not instruct Claude to do. Maybe it knows me by this point?

[5] An exemplar of an exemplar!

[6] I shudder to think how many hours I spent in high school, sequestered in my room with the Herbert Clarke trumpet method book series, which was first published in 1909 and has remained the standard for beginning-to-advanced trumpet players ever since.

[7] If you want to check it out yourself, I have made the HTML file available at this link. You’ll need to download it and then open it in a browser.

[8] “Over-sharing” by websites supposedly dedicated to helping students has been around a lot longer than LLMs.

Really glad you wrote this post, Robert. One way I've used AI (Claude, specifically) is for Just in Time Teaching. My students consume content before class by reading or watching videos and they respond to a series of open-ended questions on the material they consumed. Just before class, I download their responses and feed them to Claude, asking the AI to summarize major themes. This helps me tailor what happens in class based on their collective strengths and weaknesses. This use of AI walks the line between providing constructive feedback and grading -- I'm not assigning grades based on the AI assessment (students get credit for completion, not correctness); rather, I'm using the AI's ability to quickly summarize and categorize 40 student responses so I can provide customized instruction that is tailored to this particular group of students.

I offered my Real Analysis students https://hallmos.com which is an NSF funded project that helps with student use case 2. They seemed to like it and then, when I asked if they had been using it, they had forgotten all about it and preferred in-class feedback.