Into the ungrading-verse

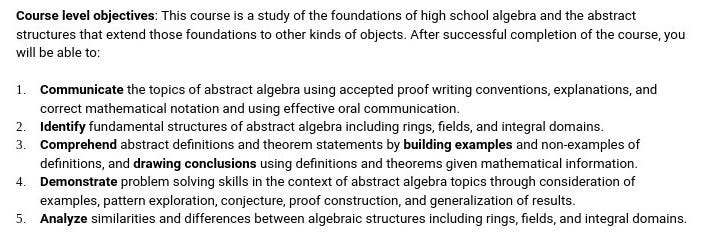

Upgrading to ungrading in an upper-level math course

Classes at our university start… today! This semester I’m teaching two sections of Modern Algebra 1, an upper-level mathematics course focusing on number theory, rings, and fields. I’m an algebraic topologist by training, much heavier on the algebra than on the topology, so this is a subject close to my heart and a class I’ve taught close to a dozen times over my career. And I’ve never been fully happy with the way I’ve taught it. So this semester, I am shaking things up in a significant way: I’m going all-in with ungrading, and teaching the course using inquiry-based learning.

David and I have been learning about ungrading and following the work of the ungrading community, and David just finished teaching a course that was fully ungraded. At the end of Winter 2021 semester [1], I had a sense that if I ever wanted to get my Modern Algebra class to where I wanted it, I needed to explore ungrading. Emboldened by what I’ve learned and by David’s success (and victimized by my own lack of impulse control when it comes to trying new things in the classroom), I’m doing just that, starting now.

Start with why

I’ve taught this course (or a variant of it) roughly once every two years throughout my career, including this time last year. Following roughly the same trajectory as all my teaching over time, my course design has evolved to being a flipped course using specifications grading. Here is the syllabus from last year’s version. It hits all the notes: clear and measurable learning objectives, activities that align with the objectives, assessments that accurately measure attainment of the learning objectives, and a grading system that clearly connects that attainment to a course grade.

It was all very well built, in my opinion. And yet, like so many other versions of the course I’d taught in the past, it left me somehow deeply unsatisfied. By all accounts, students succeeded and learned a lot. But there were signs that something wasn’t right.

The biggest red flag came in the form of repeated semantic errors in student work, from students who were otherwise nailing the learning objectives and eventually writing proofs that met the specifications. A semantic error is where you say or write something that has correct grammar, but the statement makes no sense. “Colorless green ideas sleep furiously” is a famous example. So is: “Since p is prime, it is also relatively prime.” [2] This and other nonsensical statements [3] showed up, over and over, in student work throughout the semester.
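One way to see why the second sentence fails: “relatively prime” is a relation between two integers (it holds when their greatest common divisor is 1), so any check for it needs two inputs. Here is a minimal Python sketch; the function name `are_relatively_prime` is an illustrative choice, not something from the course materials.

```python
from math import gcd


def are_relatively_prime(a: int, b: int) -> bool:
    """Return True when a and b share no common divisor other than 1."""
    return gcd(a, b) == 1


# The relation is about a pair of integers.
print(are_relatively_prime(10, 27))  # True: gcd(10, 27) = 1
print(are_relatively_prime(10, 15))  # False: both are divisible by 5

# Calling are_relatively_prime(7) raises a TypeError, because a single
# number is not the kind of thing that can be "relatively prime".
```

The signature carries the point: asking whether one prime p is “relatively prime” is like calling the function with a missing argument.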

Sure, students could revise their work to fix these. But why weren’t students learning to avoid them in the first place? Isn’t one of the main ideas of an upper-level college course, or any college course, to foster lifelong learning and to make learners independent of teachers?

Two things dawned on me. First, my students were not getting nearly enough time in class actually doing important mathematics. The workflow of 30-45 minutes of daily prep, followed by several 20-minute in-class activities that I debriefed, was not working: it always ended up being me doing the math. Second, student progress was being measured by learning objectives, which measured the lesson-level details pretty well but missed the big picture. Although having clear and measurable learning objectives is important for a number of reasons, in a class like Modern Algebra I need to see the totality of student understanding of the subject, and this goes beyond learning objectives, in the same way that a full understanding of the city you live in goes way beyond knowing how to give directions from one place to another.

Replacing the foundation

I started drafting notes for Winter 2022 over the summer of 2021. Three ideas rose to the top:

While I value clear and measurable learning objectives at the course and lesson/module level, I place greater value on being able to explain complex ideas in simple language to a variety of audiences — including oneself. A special case is developing a personal “B.S. meter” that goes off when you write down a semantically nonsensical statement and are tempted to believe it’s true. This skill and its development is the primary course-level objective.

The clearest signal I can get that a student is progressing toward this objective is having them present their work to each other and to me, and participate in a defense of that work. Using only written work to evaluate student learning, as I’d done in every previous instance of the course, is not sufficient. Writing introduces noise to that signal, namely the student’s command of written expression.

Charting and enabling student growth in this main objective and in all the smaller ones means I have to look at the totality of student understanding that emerges from a synthesis of all the work they do. This includes writing, but not writing alone: writing and oral presentation and asking questions and giving help in others’ presentations and engagement with a group and… There’s a lot. And real student mastery of the subject only becomes evident when you look at all these components as a whole.

About a month ago, I started translating these big ideas into concrete course structures.

First, as always, I wrote out clear and measurable learning objectives at the course and lesson/module level. Just like always — but also not like always, since I was now doing this with the idea that the learning objectives are just tools for determining that “totality of understanding” that I wanted. They are the ingredients, not the meal.

These are pretty generic and partially dictated by my department’s syllabus of record. But, it’s enough to work with. After that, there’s a long and boring list of specific topic objectives in the syllabus.

Once the objectives are written, we work backwards and design activities that students will do to drive them towards the learning objectives and then assessments that measure their attainment of those objectives. Here’s where the new approach seriously diverges from the old ones.

Students will be doing two main kinds of activities in the course. First, prior to class, they work on Daily Prep activities where they read sections of our textbook, work out definitions of constructs (Objective 3 above) and, critically, work out complete rough drafts of solutions to problems (which in this class are almost always proofs). Then, in class, the focus is on student presentations of their Daily Prep work to the rest of the class and using these as raw material for group work and discussion. There are other, less central activities as well, but these two are the main events.

Before I go further, I need to come clean: I basically copied every aspect of David’s Euclidean Geometry class from last semester in the build of this course. All with his permission, of course, and with a few changes. Anything that goes right this semester should be credited to him and my students; anything that falls apart is likely my fault.

Assessment and (un)grading

As I mentioned, the key difference in my approach this time is not to hyper-focus on learning objectives, but to use them as ingredients for looking at the totality of student understanding.

What I’ve realized is that for this kind of class at least, learning resists discretization. There’s no way to cleanly put student learning into buckets of learning objectives or specifications, and say that if you satisfy X number of learning objectives at Y level of competence, there is a table in the syllabus that will point you to the right grade in the course. In some courses, you can discretize; Calculus, with its emphasis on specific skills like finding derivatives and solving optimization problems, is an example where this usually works. But in a big-picture class like Modern Algebra, again it’s all about the totality of student understanding.

So that gets us to ungrading. Here’s how I have planned this to work (again mostly forked from David’s geometry class setup).

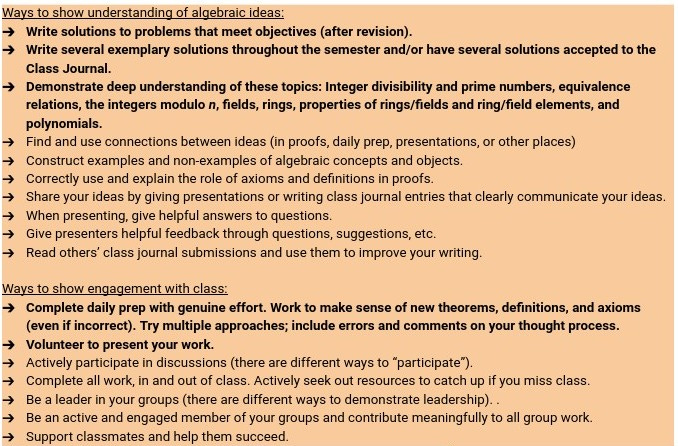

The main goal for students is to build a body of work through the course that demonstrates understanding of the mathematical topics as well as engagement with the course. In the syllabus, I have a list of things students can do, to structure that body of work:

The boldface items are considered essential components of this body of work; students really have to do these on a consistent basis to be given credit for the course. But this list is not exhaustive; students can suggest alternatives or replacements.

The body of work is built throughout the semester by student activities and assessments. Daily Prep is one kind of assessment; Homework is another, and this is a weekly assignment where a small number of important Daily Prep problems are formally written up following class discussion. Homework problems will be given feedback but no grades.

Well, sort of no grades. Certainly no points; but I will evaluate student homework submissions using a chart pretty similar to the old EMRF/EMRN rubric I use elsewhere:

There is a separate document that has the full specs for acceptable and exemplary homework solutions, and another document with an example of each category of work you see in the chart.

I am going to set up the LMS at the beginning of the semester so that each homework assignment receives a “grade” of either 0 or 1, with 1 meaning “You turned it in properly and there’s some feedback waiting for you”. The four verbal labels above will not be displayed because I am fully aware of Goodhart’s Law and what Butler and Nisan said about providing grades or grade-like summaries of student work. But those labels will be the focal point of my feedback (e.g., “This work doesn’t meet the standards for homework because […] but it definitely demonstrates partial understanding and useful progress, so make sure to submit a revision.”)

Based on talking with David about his experiences in geometry, I suspect I will be tempted to change this up and start having the LMS put the labels on the work in the gradebook. I’m suspicious of this, but if it helps students then I’ll do it.
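The gradebook setup described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name, field names, and placeholder labels are hypothetical, it does not use any real LMS API, and the actual four verbal labels from the chart are not reproduced here.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical placeholders standing in for the four verbal labels in the chart.
LABELS = ("label_1", "label_2", "label_3", "label_4")


@dataclass
class HomeworkSubmission:
    student: str
    submitted_properly: bool
    label: Optional[str] = None  # instructor-side only; drives the written feedback
    feedback: str = ""


def gradebook_entry(sub: HomeworkSubmission) -> dict:
    """Build what the student sees: a 0/1 mark and written feedback, no label."""
    return {
        "student": sub.student,
        "grade": 1 if sub.submitted_properly else 0,
        "feedback": sub.feedback,
    }


sub = HomeworkSubmission(
    student="student_a",
    submitted_properly=True,
    label="label_3",
    feedback="Useful progress, but not yet meeting the standards; please revise.",
)
entry = gradebook_entry(sub)
print(entry["grade"])  # 1
```

The label stays on the instructor’s side and shapes the wording of the feedback, while the gradebook itself only records whether the work was turned in properly. Switching to displayed labels later would mean adding the label to the returned entry.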

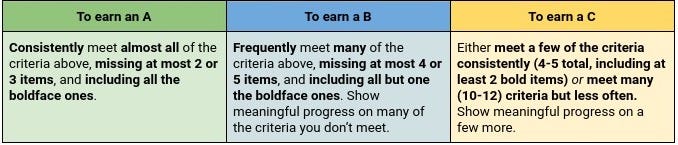

Students build a body of work through Daily Prep, Homework, presenting, discussing, being good citizens and leaders in their group, and more through the semester. I assign grades as follows:

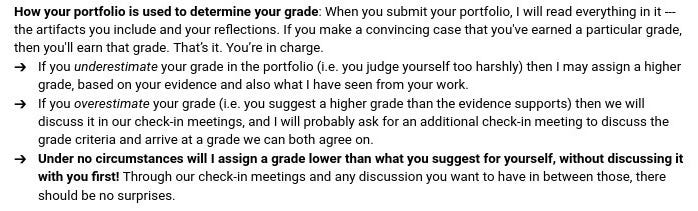

Students do this through their Portfolio. This is just a collection of their best work (that the students assemble) along with some short essays on their work in the class and, importantly, an essay where they state what grade they think they have earned in the class based on the evidence they present in their portfolio. I will have two mid-semester check-in meetings with each student to discuss their progress, and then I will read their portfolios at the end. If there’s sufficient evidence to support their request for a grade, that’s the grade they’ll get.

As the syllabus states: That’s it. That’s the grading system. You’re in charge.

Last semester, at the end of my Discrete Structures for Computer Science course, I beta-tested this idea of “tell me what grade you think you earned and then explain why”. The course was done with specs grading, so this was just a hypothetical exercise. But in one section, all but 1-2 students gave themselves the same grade that they earned with the specs grading system. In the other section, several students vastly overestimated their grades (for example, asking for a B with no homework ever turned in). But of the students who provided concrete evidence of learning and growth, almost all assigned themselves grades that agreed with what my specs grading system assigned them.

In the case of disagreements about grades, I have this policy this semester:

So in case of disagreements, we talk it out, and together we aim for the solution that benefits the student the most while still being justifiable by facts. This strikes me as being faithful to real-world performance evaluation practices — and communication between grown-ups in general.

Unknown unknowns

I won’t lie: I am nervous about all this.

I have only ever given up control of a class to students at this scale once before: About 15 years ago, at my previous institution, where I had just gotten tenure and decided to use it to teach Modern Algebra using a setup very similar to this. It was an unmitigated disaster. Students came to class having done precisely nothing to prepare; my call for volunteers to present work on the first presentation day was met with silence. Same for the next day, and the day after that. Students began telling me that I should “just teach the material”. I dug in my heels and refused. Students did the same. In many ways, it was the beginning of the end of my stay at that college. I swore that I would never again attempt this approach, regardless of my rank or tenure. And yet, here I am.

There are a lot of “what ifs” here, and I’ve written out contingency plans for the ones I can identify. It’s the contingencies I can’t identify, the unknown unknowns, that occupy my mind. For these — and for those of you who are in similar situations — I will lean on a few basic principles:

Students need to be active agents in constructing their understanding of concepts. Any good-faith effort in that direction is an objective good.

Students also need to be active agents in evaluating their own work, and ultimately the main drivers of their grades, insofar as we must have grades in the first place.

Start with respecting and trusting students.

Continue by engaging in regular, honest, and two-way communication with students and then acting in good faith on what they tell you.

But don’t do anything out of a desire for retweets or page views, or a belief that you somehow know better than your students.

I’m not sure how well this is all going to go, but I at least feel like I’m doing it for the right reasons. If I stick to those reasons, I might mess things up in the short term but I won’t go too far wrong.

[1] “Winter” semester is my university’s Michigan-climatically-appropriate name for “Spring” semester.

[2] “Relatively prime” is a term that describes pairs of numbers. For example, the integers 10 and 27 are relatively prime. Using it to describe a single number doesn’t make sense.

[3] I do not mean the term “nonsensical” in a derogatory way, and I am not trying to shame or insult students. I mean it literally: a nonsensical statement is one that may have correct grammar but which does not make sense, that is, does not resolve into semantically meaningful information.

I really appreciate the open sharing you do about your grading and experimentation. I've incorporated your EMRN scale into my American Sign Language courses along with other components of specifications grading (including retakes without penalty). It has transformed my relationship with students and grading.

I’m interested to hear how it goes! Perhaps something like this can be used in lower-level classes like calculus too. I really like the shift to a more holistic focus. I’m just wondering about your workload during each week. How much time are you spending giving feedback and meeting with students to discuss their progress? I cannot think of a way to scale this up to 36 students per section.