Improving the feedback given to students

Four big problems about feedback from education research

Today’s guest post is from Prof. Sarah Hanusch, an associate professor of mathematics at SUNY Oswego, a regional comprehensive university in Central New York. She holds a Ph.D. in mathematics education from Texas State University, and researches the instructional practices of collegiate mathematics instructors, especially in proof-intensive courses. She is also an advocate for active learning pedagogy and is an officer of the Greater Upstate New York Inquiry Based Learning Consortium. Outside of her job, Sarah spends her time teaching group exercise classes, cooking and baking, and spending time with her husband and two sons.

Feedback is one of the cornerstones of alternative grading systems, as we want our students to learn from feedback and apply it to other assignments, assessments or revisions. However, not all feedback is created equal, and the research my collaborators and I have conducted has shown that many students struggle to understand the feedback that we give them. In particular, we have discovered four big problems with the feedback we leave on students’ papers: (1) students don’t use it, (2) students don’t understand it, (3) students can’t apply it, and (4) we may not give it equitably.

I have two quick disclaimers about the research findings that I will discuss in this blog post. The first is that these studies concerned feedback on student-produced mathematical proofs, and much of the data came from clinical interviews that were not connected to a specific course. Although this specific research focused exclusively on student proofs, many of the findings could apply to other areas of mathematics and to other subjects.

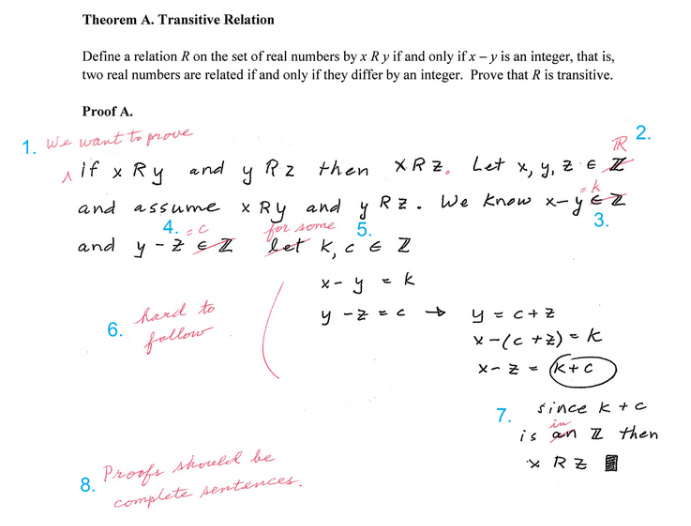

A mathematical proof is a logical deductive argument that shows a mathematical relationship (a.k.a. the theorem) is always true, and it’s the structure mathematicians use for disseminating new mathematics. Published proofs can be several pages long, but the types of proofs we ask undergraduate students to write are usually one or two paragraphs. The figure shows an example that we used in one of our interviews, with the student-produced proof in black and faculty feedback in red. The blue numbers were added by the research team after the completion of the interviews. Do not be alarmed if you don’t understand the proof in the example; I don’t expect you to! But I will refer to some of the feedback provided in this example, and explain why it wasn’t so useful to the students we interviewed.

The second disclaimer is that the examples of feedback that we collected came from traditionally graded courses with feedback provided on physical paper or from clinical interviews separate from a classroom environment. This means that none of the faculty members that wrote the feedback intended the students to use the feedback to revise and resubmit that exact assignment. We suspect that feedback for revision might be written differently than feedback to justify a grade, although that has not been studied in this context.

Problem 1: Students don’t use the feedback

Even students who highly value feedback will not always read it carefully. Why? One reason is that they don’t have the time to do so. Often students are given only a minute or two to look at physically returned papers before we start new content, which means they look at the grade and then put the paper away. Once it’s put away, most students do not pull it back out unless there is a reason to. Some students also said they did not look at the feedback because of negative emotions, like dread or disappointment.

In the interviews we conducted, many students reported that they would read the feedback and make “mental notes” about their proofs when they prepared for their exams. Most of the students said that they would not revise their homework proofs unless they were explicitly asked to or if they thought that exact proof would be on their exam. However, all of the students we interviewed claimed that they would use the feedback if they were instructed to revise and resubmit the proof.

Most alternative grading schemes are designed to incentivize students to use their feedback, but there are still things we can do to facilitate this. We can give the students time during class to read their feedback, even if that feedback was provided electronically. This ensures that physical papers are not just immediately stuck into a folder to never see light again, and it gives the students a chance to question the feedback.

Problem 2: Students don’t understand the feedback

Students also often do not understand the feedback that we offer to them. Students are less familiar with the concepts, definitions and proof structures than experts. For example, I once had a student show me approximately half a dozen quizzes where she attempted to prove a theorem about a greatest common divisor (gcd). On each of the proof attempts, I had written “you only proved half the definition”. Our course notes wrote the definition of d being the gcd of a and b as two statements: (a) d divides a and b, and (b) if c divides a and b, then c divides d. In each proof attempt this student proved statement (a), but then stopped without any reference to (b). I felt that telling the student she only proved half the definition would prompt her to look up the definition, but she did not take it that way and had no idea what I meant by only proving half the definition. I realized at that moment that I had failed to give her feedback that she could use at her current level of understanding.
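For readers who want to see the definition in symbols, the two statements can be sketched as follows. This follows the wording above; any extra convention (for example, requiring d to be positive) is an assumption and may differ from what the course notes actually said. The `aligned` environment assumes the amsmath package.

```latex
\[
\begin{aligned}
&\text{An integer } d \text{ is a greatest common divisor of } a \text{ and } b \text{ if:}\\
&\text{(a)}\quad d \mid a \ \text{ and } \ d \mid b,\\
&\text{(b)}\quad \text{for every integer } c:\ (c \mid a \ \text{ and } \ c \mid b) \implies c \mid d.
\end{aligned}
\]
```

A proof that stops after (a) only shows that d is a common divisor. Statement (b) is the half that makes it the greatest one.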

Students often look at different aspects of proofs than experts, focusing more on the form of proofs than on potential logical issues. For example, when asked to explain why “we want to prove” was added to the first line of the example proof, the research team interpreted this feedback as necessary for avoiding a logical fallacy [1]. In contrast, most of the students ignored the logical problem and instead focused only on the form, saying something like “that’s just one of the proper ways to start a proof.”

Similarly, the feedback where the faculty member crossed out “let” and wrote the phrase “for some” was a missed learning opportunity for the students. There is a subtle logic issue that underlies the need for the alternate phrasing [2]. But five of the eight students we interviewed were not able to explain the logic.

One important note is that students can revise a proof without understanding why the revision is needed. Every student in our study revised the example proof above to include the phrase “we want to prove” at the beginning of the proof and wrote “for some” instead of “let”, regardless of whether they could explain why that was needed. In other words, they could make the changes without understanding how to avoid the same error in the future.

Another study (Spiro, et al.) showed that the four mathematics faculty whose grading was collected over an entire semester used checkmarks with no other feedback more than 50% of the time, and that direct edits without explanation, where the professor inserts wording directly into the writing, were the second most common feedback type. Checkmarks only convey that something was correct; they do not specify what aspect was correct (the logic? the writing? the notation?). Also, many times it was impossible to tell which line of writing the checkmark was referring to. Direct edits only convey what needs to be changed, but not why that change is needed or how it applies to similar tasks. These are the least useful feedback types for the growth of students’ conceptual understanding.

We can help students better understand feedback in several ways. First, we can provide more explanations. Students need to know why something is correct or incorrect even more than they need to be told what the correct form is. They also need feedback that tells them what aspects of their work the professor focused on. Was it their logical structure? The theorems and definitions they cited? The quality of the writing? Was their proof perfect? Or just adequate? This will help the students better situate their personal understanding of the material and their progress in the course.

Rubrics can be a useful tool for conveying to students what you were looking at in their proof. The RVF rubric for grading proofs assesses the readability, validity and fluency of a proof, and provides a separate score for each of the criteria. Readability refers to the use of language, punctuation and organization. Validity refers to the logical structure of the proof. Fluency refers to the integration of notation, the use of precise mathematical vocabulary, and the overall conciseness of the proof. When Bob Moore interviewed faculty about their grading practices on proofs, he found that most faculty members looked at those three criteria when they assessed proofs, but also looked for evidence of understanding of the mathematical concepts and definitions involved. In other words, they were looking for evidence of student learning. These four criteria (readability, validity, fluency and understanding) do overlap significantly, but each highlights a different essential component.

Problem 3: Students can’t apply the feedback

The third problem is that students can’t apply the feedback they get to new tasks. We tend to only provide students “feed-back”, but they also need “feed-forward” if they are to actually apply the feedback to different proof attempts. Feed-forward is defined as answering the question “What activities need to be undertaken to make better progress?” Many students need to be told specifically how their feedback applies to new tasks, and what they need to do on those new tasks. Notice that of the eight items of feedback on the example proof, six give no indication of how to apply the feedback to another proof writing task. The two items that could possibly be interpreted as “feed-forward” are inserting “we want to prove” and saying “proofs should be complete sentences”. But even those feedback items only vaguely indicated which future tasks the feedback might apply to. None of the comments included instructions about how to use the feedback to improve learning.

Sadler summarized this problem:

Students should be trained in how to interpret feedback, how to make connections between the feedback and the characteristics of the work they produce, and how they can improve their work in the future. It cannot simply be assumed that when students are ‘given feedback’ they know what to do with it (p. 78)

It’s not enough to give students direct edit feedback if we want them to apply the feedback to a new task. They need to be told (or given a chance to reflect on) how the feedback might relate to a different task and, as discussed in the previous section, that often means they need an explanation about why something is correct or incorrect. They also need direction on what actions they should complete as a result of the feedback.

In the example proof, feedback #2 directed the student to replace the set of integers (Z) with the set of real numbers (R). More helpful feedback would be to say, “This relation is defined on the real numbers. On your next proof of the transitive property, make sure that you choose the correct domain.” Similarly, feedback #1 could be extended by saying, “Remember, in this class, we begin our proofs by stating our goal, which must include the phrase ‘we want to prove’.” Both of these feedback revisions tell the student what to do on a future task, and which tasks the feedback applies to. Including information specific to the student’s progress in the course could also be an example of feed-forward, such as “I recommend that you revise this proof, and then practice question 6 from the textbook before next week’s retake.”

Problem 4: We may not apply feedback equitably

There is evidence that we as faculty do not provide feedback and grades equally to our students. Two studies focusing on assessing student proofs and one assessing problem solving in physics revealed that faculty often have to judge whether a student has sufficient understanding of the concepts when the evidence from the student is ambiguous. In these situations, we give some students the benefit of the doubt, but assess other students as having a misunderstanding.

In both of the studies on assessing proof writing, the faculty claimed they considered the students’ previous performance in the course when making these judgments. For example, the faculty interviewed by Miller, et al. claimed that “better” students could fill in gaps in an argument, and if a “weaker” student turned in a good proof they might be suspected of copying a proof. Ultimately this means we grade the student, not only the work itself.

However, these studies relied on clinical interviews situated outside of an actual classroom setting. This means they did not document how frequently a student’s grade is changed because they are or are not given the benefit of the doubt. Nor do we know if there are differences between who does and does not get the benefit of the doubt along racial, economic, gender or any other type of category. This is an area that clearly needs additional research.

Since this problem is still so under-researched, there are not any concrete suggestions that I can give at this time. But I feel that acknowledging the problem is the first step to solving it. We each need to be aware of our own biases when making these instructional and feedback decisions.

Summary and Call to Action

When we provide students with written feedback, here are some of the best practices:

Give the students time to review their feedback during class.

Give the students an incentive to learn from the feedback through revision or reattempts.

Tell the students why their work is correct or incorrect, not only what.

Even when the work is good, tell the students what components of the work you evaluated. Rubrics can help with this.

Tell the students explicitly how the feedback applies to similar tasks in their future.

Give the students explicit directions on what they should do with the feedback to learn.

Be aware of your biases and the potential for your feedback to be swayed by those biases.

I know that giving explanations and more detailed feedback can increase the amount of time that we spend. I have not fully solved that problem. But some alternate techniques may help, such as using technology or recording feedback videos. Gradescope allows you to save feedback and copy it to other student work. When grading digitally, I often create a Word document with the feedback that I give, because it’s easier to copy and paste from that document than from within my LMS. Some faculty members, particularly some of my colleagues who teach English writing, feel that giving feedback via video is faster for them than writing it. Alternatively, you could make one video for the whole class in which you give a mini lecture about why the errors you saw are errors. Similarly, talking with the students about common mistakes (and why they are mistakes) when you hand back the papers is a valuable tool. Oral feedback to the whole class is also a useful way to convey what students should do with the feedback.

[1] The student wrote the conclusion of this proof as the first sentence. Some faculty have students begin their proofs by stating “we want to prove” the conclusion, as a problem solving tool. However, if you do not include that introductory phrase, it appears that you are assuming the conclusion, which is a logical fallacy.

[2] The logic issue here has to do with whether k (and c) can be any integer or a specific integer. When mathematicians say “let k be an integer,” logically this says that k could be any integer. But in this case, k is the specific integer that is the difference x-y. The phrasing “for some” means that k is one specific integer, which is what is needed in this case.
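In symbols, the distinction is between a universal and an existential quantifier. The sketch below assumes fixed numbers x and y whose difference is an integer, as described above; it is an illustration of the two readings, not a reproduction of the student’s proof. It assumes the amsmath and amssymb packages.

```latex
\begin{align*}
\text{``let } k \text{ be an integer''}
  &\quad\longrightarrow\quad \forall k \in \mathbb{Z}:\ x - y = k \\
\text{``for some integer } k\text{''}
  &\quad\longrightarrow\quad \exists k \in \mathbb{Z}:\ x - y = k
\end{align*}
```

For fixed x and y, the first statement cannot hold, because x - y equals at most one integer. The second statement is the one the proof needs.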
