A/B Testing Alternative Grading

Using data science to evaluate a new grading system

Today’s guest post comes to you from Erin Coffman, an assistant clinical professor of business analytics at the Kelley School of Business at Indiana University Indianapolis. At Kelley, she coordinates a required undergraduate analytics class (taken by around 500 students per year) and teaches a foundational analytics class in the MBA program. Erin holds a PhD and MA in economics from Georgia State University and a BA in mathematics education and economics from Anderson University (Indiana). Before joining IU in 2023, Erin spent a decade working as a data scientist at Airbnb, where she also developed and led a company-wide data and analytics training program called Data University. She lives in Indianapolis with her miniature schnauzer, Pretzel, and enjoys traveling, biking around town, golfing, and playing pub trivia. You can reach her at erincoff@iu.edu.

About IU-Indianapolis

IU Indianapolis is an R1 public university and Indiana University’s second-largest campus, with around 20,000 undergraduate students and nearly 10,000 graduate students. Most students are traditional (i.e., they enroll directly after graduating high school), and many commute to the campus from the city and surrounding suburbs. Additionally, many are first-generation students and work significant hours (25+ weekly) while attending school full time. The Kelley School of Business is a top-ranked business school and has locations in both Bloomington and Indianapolis. In Indianapolis, Kelley enrolls about 1,200 undergraduate students, and many lower-level courses (including the one discussed in this post) are open to pre-business students.

My First Attempt (The Problem)

I was hired at IU in 2023 to coordinate and teach a newly required undergraduate course, K303: Technology and Business Analysis, which is the second in a series of technology- and analytics-focused courses in the business school. As the coordinator working with all K303 faculty, I create the schedule, choose course topics, make assessments, populate each section’s Canvas site, collect feedback across sections from students and faculty, and iterate on all of these things each semester.

As for the course itself, K303 is based on the steps of the analytics cycle: data collection, transformation, exploration, modeling, and visualization. We use Excel and Tableau as our primary tools. The course is required for students before enrolling in their Integrated Core semester, which is a key curricular piece of a Kelley education. Most students take K303 in their sophomore year; about half are already enrolled in the business school, while the rest are hoping to be accepted. Sections average 50 to 65 students, and we hold 9 or 10 sections per year.

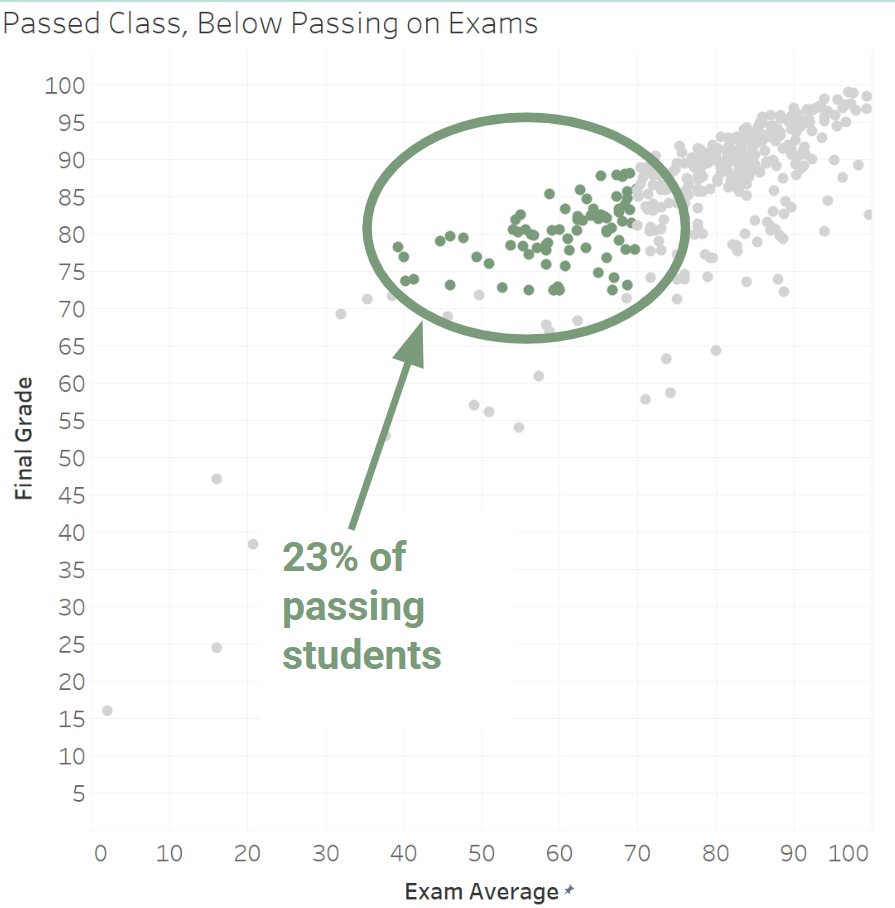

In my first year, I set up K303 with a typical grading structure: 1000 points available, 3 exams, a relatively strict attendance policy, etc. The results weren’t terrible, but students consistently reported feeling overwhelmed by the pace and highly anxious about exams, and I saw a clear mismatch between grades and student understanding. The visualization below shows data from the first year: nearly a quarter of the students passed the class but averaged a below-passing grade on exams.

While I am not fully sure why this result occurred, my theory is that students invested in making sure their homework assignments were perfect or near-perfect (many auto-graded assignments allowed unlimited retries, so it was relatively easy to “game” the results) to act as an insurance policy against low test scores. Because homework and attendance made up 60% of the grade, it was possible for students to do relatively poorly on the exams and still pass the class. The result shown above seemed like a big problem: I care about what my students actually know a lot more than the course’s grade distribution.
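To make the arithmetic behind that "insurance policy" concrete, here is a minimal sketch. It assumes the 40% of the grade not covered by homework and attendance came entirely from exams, and it uses a hypothetical passing cutoff of 73%; neither number is stated in the post, and the function names are illustrative only.

```python
# Weights: the post says homework and attendance made up 60% of the grade.
# Treating the remaining 40% as exams is an assumption for illustration.
HOMEWORK_ATTENDANCE_WEIGHT = 0.60
EXAM_WEIGHT = 0.40

# Hypothetical cutoff for a passing grade; not taken from the post.
PASSING_PERCENT = 73.0


def course_percent(homework_attendance_pct: float, exam_pct: float) -> float:
    """Weighted course percentage from two components, each on a 0-100 scale."""
    return (HOMEWORK_ATTENDANCE_WEIGHT * homework_attendance_pct
            + EXAM_WEIGHT * exam_pct)


def passes(homework_attendance_pct: float, exam_pct: float) -> bool:
    """True if the weighted percentage reaches the assumed passing cutoff."""
    return course_percent(homework_attendance_pct, exam_pct) >= PASSING_PERCENT


# Near-perfect homework plus a failing exam average (hypothetical student).
print(course_percent(98, 55))
print(passes(98, 55))
```

Under these assumed numbers, a student with perfect homework and attendance would need only 32.5% on exams to reach the cutoff, since 60 + 0.4 × 32.5 = 73.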

Standards-Based Grading (A Solution?)

Also in my first year at IU, I was assigned a summer section of K303. I was worried about having two very different audiences: one being students who had failed and had to retake the course, and the other being high-achiever types looking to get ahead. So, I visited our very helpful Center for Teaching and Learning (CTL) to get some ideas. They suggested specifications and/or standards-based grading as a way to serve both populations, and I was immediately obsessed. I restructured the course with the help of many resources, but mostly by using posts from this very Grading for Growth blog.

A few of the major changes I implemented:

No more points!

The course was pared down to 13 skills assessed by “Checkpoints”, one for each skill. These Checkpoints replaced traditional exams, and multiple variations of each Checkpoint were created and offered across a set of “Checkpoint Days”. Seven skills were identified as “Core”: all students had to pass these in order to earn a passing grade for the course.

Larger homework assignments (called Mini-Projects) and an optional group project were developed, and these assignments offered retries without penalty.

Attendance, discussion posts, and pre- and in-class practices (lightweight assignments) were bundled into “Engagement Credits”.

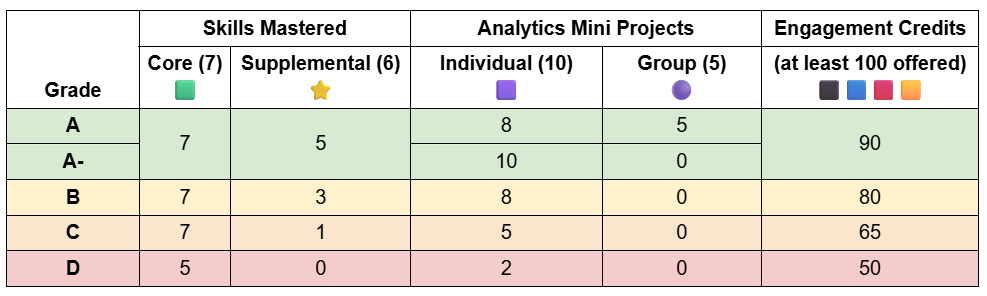

A grade table (example shown below) was used to communicate to students what had to be successfully completed to earn each grade. The numbers in the parentheses indicate the number offered for each category.
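A grade table of this kind amounts to a lookup: a student earns the highest grade whose requirements are all met. The sketch below illustrates the idea only. The 7 Core and 6 other skills follow from the post (13 skills, 7 of them Core), but every threshold in `GRADE_TABLE`, and the names `Requirement` and `earned_grade`, are hypothetical and are not the actual K303 table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    grade: str
    core_skills: int         # Core Checkpoints passed (7 offered, per the post)
    other_skills: int        # non-Core Checkpoints passed (13 - 7 = 6 offered)
    mini_projects: int       # hypothetical threshold
    engagement_credits: int  # hypothetical threshold


# Hypothetical thresholds, ordered from highest grade to lowest.
# Every row requires all 7 Core skills, matching the rule that Core skills
# must be passed to earn a passing grade.
GRADE_TABLE = [
    Requirement("A", 7, 5, 4, 40),
    Requirement("B", 7, 3, 3, 35),
    Requirement("C", 7, 1, 2, 30),
]


def earned_grade(core: int, other: int, mini: int, credits: int) -> str:
    """Return the highest grade whose requirements are all satisfied."""
    for req in GRADE_TABLE:
        if (core >= req.core_skills
                and other >= req.other_skills
                and mini >= req.mini_projects
                and credits >= req.engagement_credits):
            return req.grade
    return "Not passing"


print(earned_grade(core=7, other=3, mini=4, credits=45))
print(earned_grade(core=6, other=6, mini=4, credits=45))
```

The second call shows the design choice that matters most: missing a single Core skill blocks a passing grade no matter how much other work is complete.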

Testing the Results

The summer class was a great testing ground, as I had only 11 students. However, there were a lot of kinks to work out, especially with Canvas (IU’s learning management system). I knew I was going to continue with the new structure, but, given the numerous logistical issues to work out, I was hesitant to require the other faculty teaching K303 in the fall to implement the same.

The data scientist and economist in me saw this as an opportunity to employ an A/B test to identify the effects (if any) of the new grading system. At Airbnb, we tested everything, from website updates to how customer service agents were trained. A true A/B test is used in tech to isolate a change and test the effects, usually on a website (e.g., “If we change the checkout button to green from red, will more people click it?”). Web traffic is split and a portion of users see the treatment (green button) while the rest see the control (red button). Because everything else remained the same, the effect of changing the button color can be measured. (And of course, this is effectively just a tech rebranding of the scientific method!)
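For readers who have not seen how web traffic gets split, here is a minimal sketch of one common approach: hashing a user ID so each user lands in the same group on every visit. The experiment name, function names, and 50/50 split are assumptions for illustration, not a description of Airbnb's tooling.

```python
import hashlib


def assign_variant(user_id: str,
                   experiment: str = "checkout_button_color",
                   treatment_share: float = 0.5) -> str:
    """Deterministically assign a user to 'treatment' or 'control'."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a value in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "treatment" if bucket < treatment_share else "control"


def click_rate(clicks: int, visitors: int) -> float:
    """Share of visitors who clicked; 0.0 when there were no visitors."""
    return clicks / visitors if visitors else 0.0


def lift(treatment_rate: float, control_rate: float) -> float:
    """Absolute difference in click rate between treatment and control."""
    return treatment_rate - control_rate


# The same user always sees the same button color.
print(assign_variant("user-123"))
print(assign_variant("user-123"))
```

Because assignment depends only on the user ID and experiment name, nothing else about the experience differs between the two groups, which is what lets the difference in click rates be attributed to the change.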

As we all know, it’s impossible to design a perfectly controlled experiment in education. However, some data is almost always better than no data. In order to best isolate the treatment (alternative grading) from the control (traditional grading), I did the following to set up the experiment:

I assigned two class sections (taught by me) as the Alternative Grading sections. (I initially wanted to teach one of each type to further isolate the effects, but the school has a policy against the same professor teaching sections of the same course differently.[1])

I assigned two class sections (taught by two other faculty) as the Traditional Grading sections.

I updated the exams for the Traditional sections to map to Checkpoints in the Alternative sections. For example, the “Excel Functions” part of the traditional exam directly mirrored the “Excel Functions” Core Skill Checkpoint offered in the alternatively graded sections. (This allowed me to directly compare student performance on assessments for each skill across groups.)

I updated schedules for all sections to follow the same topics and cadence, including the same practices and homework assignments.

I designed a student sentiment survey to collect feedback at different points in the semester.

Did It Work?

The short answer is yes: alternative grading yielded higher student achievement, lower student anxiety, a higher pass rate, and a higher average GPA.

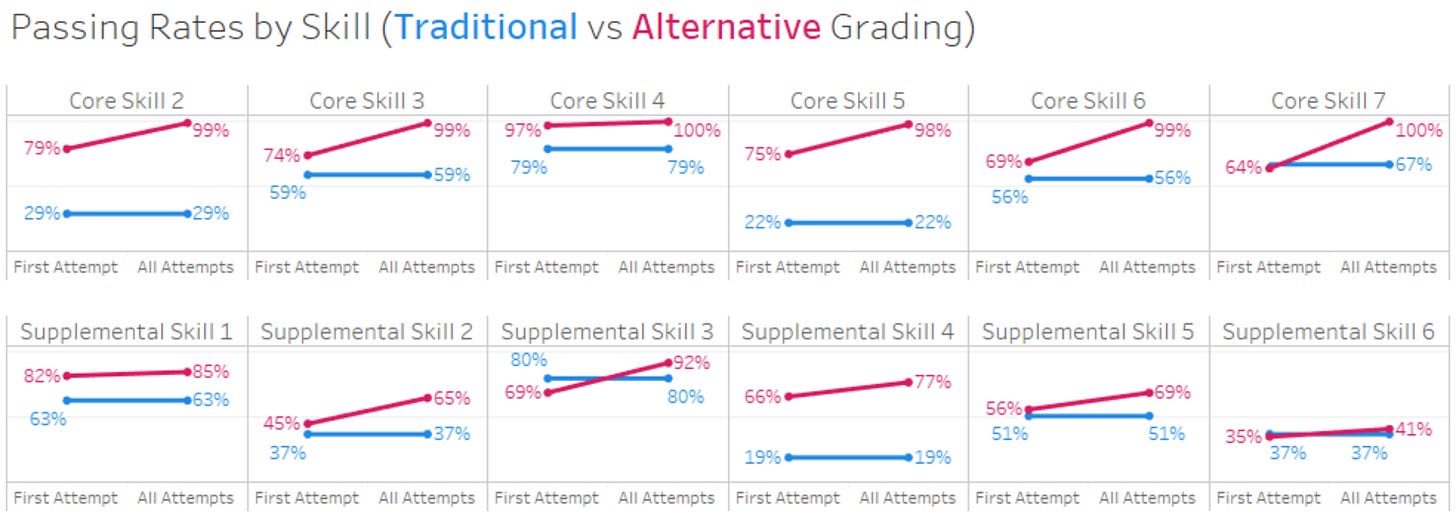

The visualization below shows the passing rates by first attempt and after all attempts. Note that in the traditionally graded sections, students only got one attempt (on a traditional exam) to pass each skill, which is why the “first” and “all attempts” pass rates are the same.[2]

A few key takeaways:

In every single Core Skill (those required to show proficiency in order to pass the class), alternatively graded students achieved higher (and near 100%) pass rates by the end of the semester.

In almost all skills, alternatively graded students also achieved higher passing rates on their first attempt. Why is this? One theory is that because each skill was offered multiple times in the alternatively graded sections, students took it for the first time when they felt prepared. Students in the traditionally graded class had one shot: the day the exam was offered.
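The two pass rates compared in these takeaways can be computed from a simple log of attempts. The sketch below assumes a hypothetical record layout of (student, skill, attempt number, passed) and uses made-up records; it is not the actual K303 data or analysis code.

```python
from collections import defaultdict


def pass_rates(attempts):
    """Return {skill: (first_attempt_rate, all_attempts_rate)}.

    Each record is (student_id, skill, attempt_number, passed).
    The denominator is the number of students who attempted the skill.
    """
    first_attempt_no = {}
    passed_first = {}
    passed_ever = defaultdict(bool)

    for student, skill, attempt_no, passed in attempts:
        key = (student, skill)
        # Track the result of the lowest-numbered attempt for this pair.
        if key not in first_attempt_no or attempt_no < first_attempt_no[key]:
            first_attempt_no[key] = attempt_no
            passed_first[key] = passed
        # A student has passed the skill if any attempt succeeded.
        passed_ever[key] = passed_ever[key] or passed

    totals = defaultdict(lambda: {"students": 0, "first": 0, "all": 0})
    for (student, skill), first_ok in passed_first.items():
        row = totals[skill]
        row["students"] += 1
        row["first"] += int(first_ok)
        row["all"] += int(passed_ever[(student, skill)])

    return {skill: (row["first"] / row["students"], row["all"] / row["students"])
            for skill, row in totals.items()}


# Made-up records for three hypothetical students.
records = [
    ("s1", "Excel Functions", 1, False),
    ("s1", "Excel Functions", 2, True),
    ("s2", "Excel Functions", 1, True),
    ("s3", "Excel Functions", 1, False),
]
print(pass_rates(records))
```

With a single attempt per student, as in the traditionally graded sections, the two rates are identical by construction.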

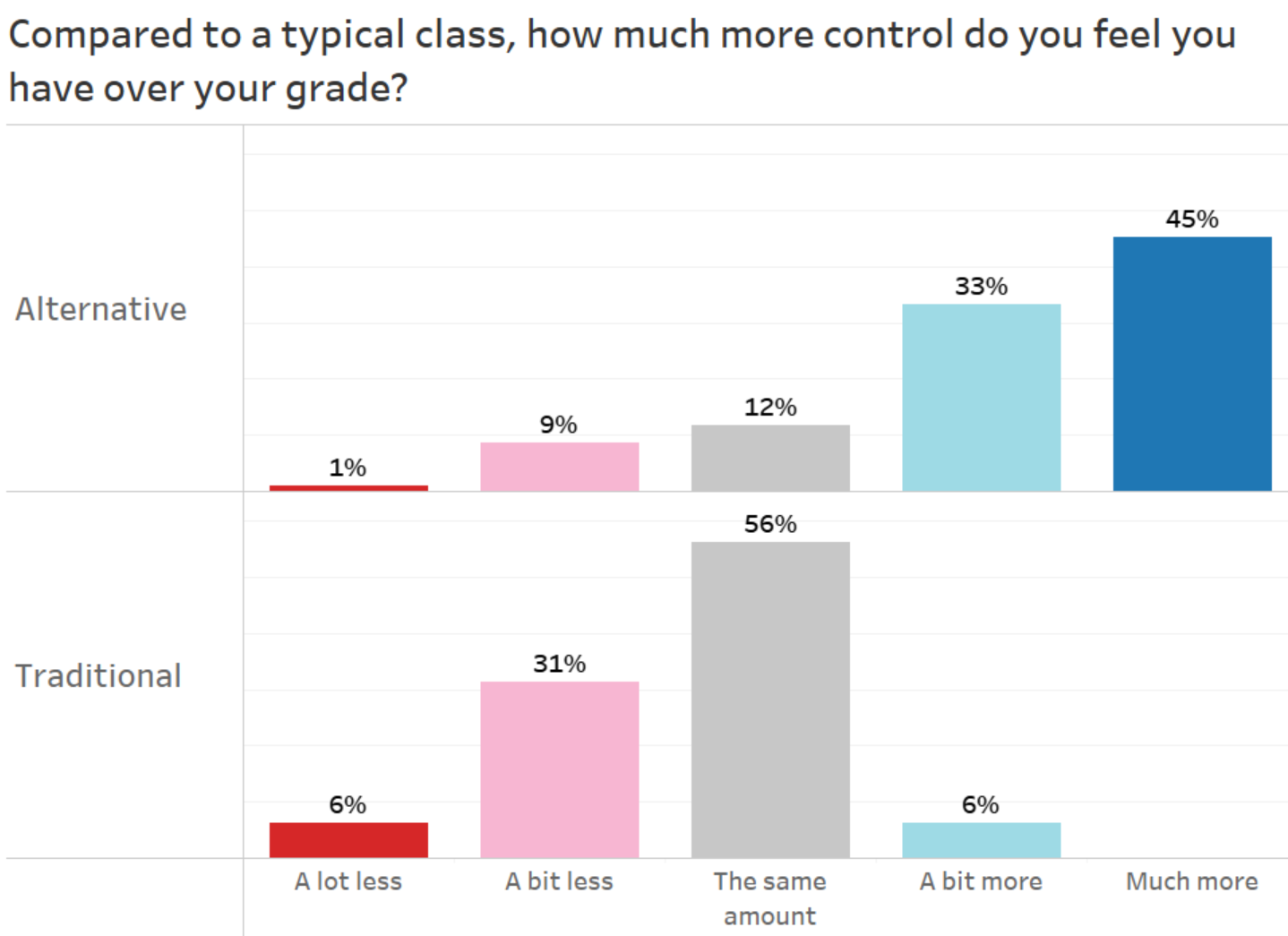

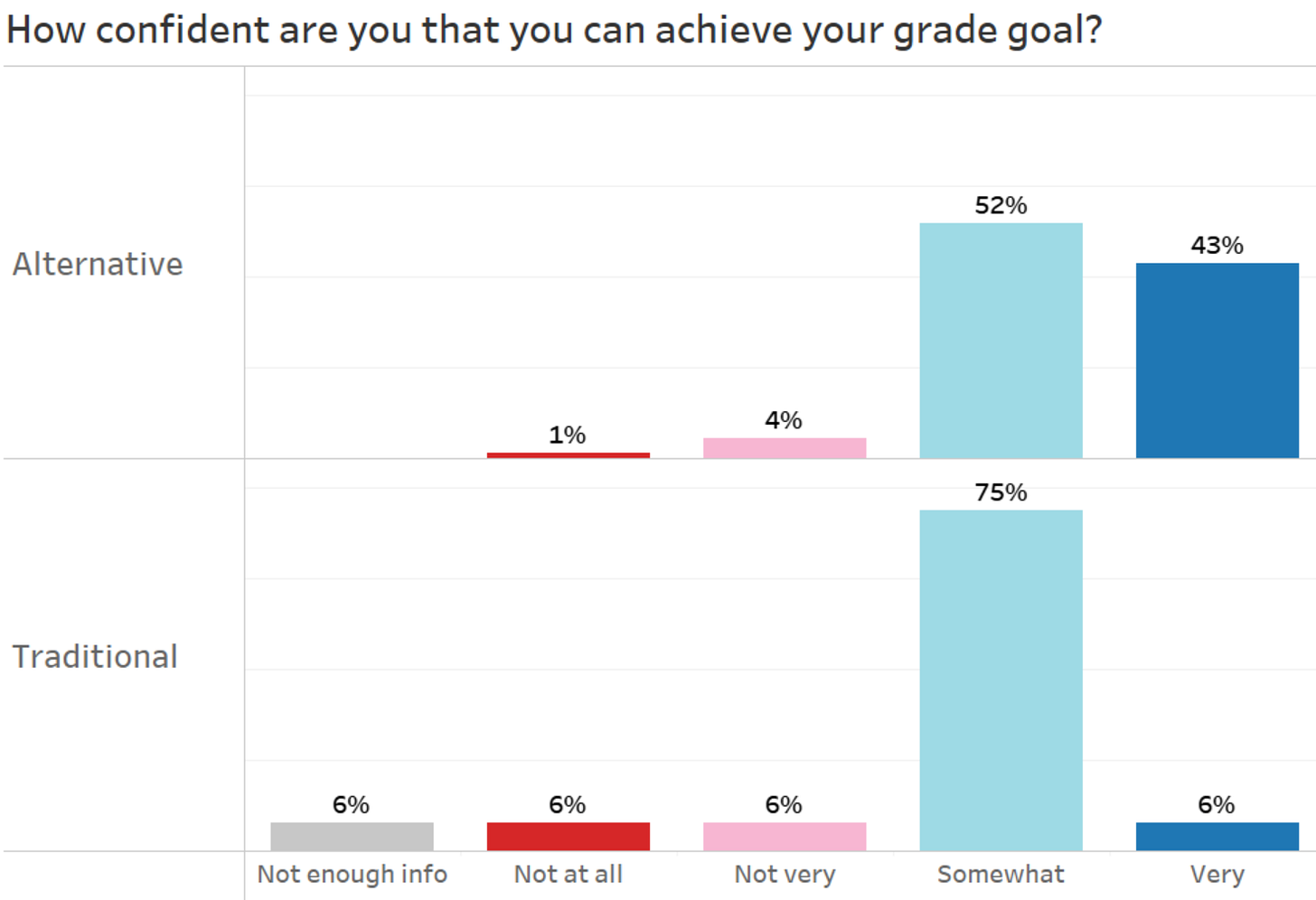

Another facet I wanted to investigate was student sentiment. We surveyed the students three times throughout the semester: at the beginning, at the mid-semester point, and a few weeks before the end. I’m showing the mid-semester results here.[3]

The chart below shows how students viewed control of their grade in K303:

As you can see above, students in the alternatively-graded sections of K303 felt more in control of their grade destiny compared to a typical class, as well as compared to students in the traditional grading sections.

To gauge any effect the grading system may have had on student confidence, we asked students to report how confident they were of achieving their grade goal for the course. While most students felt somewhat or very confident about achieving their goal, the share of students feeling very confident was much higher in the alternatively graded sections.

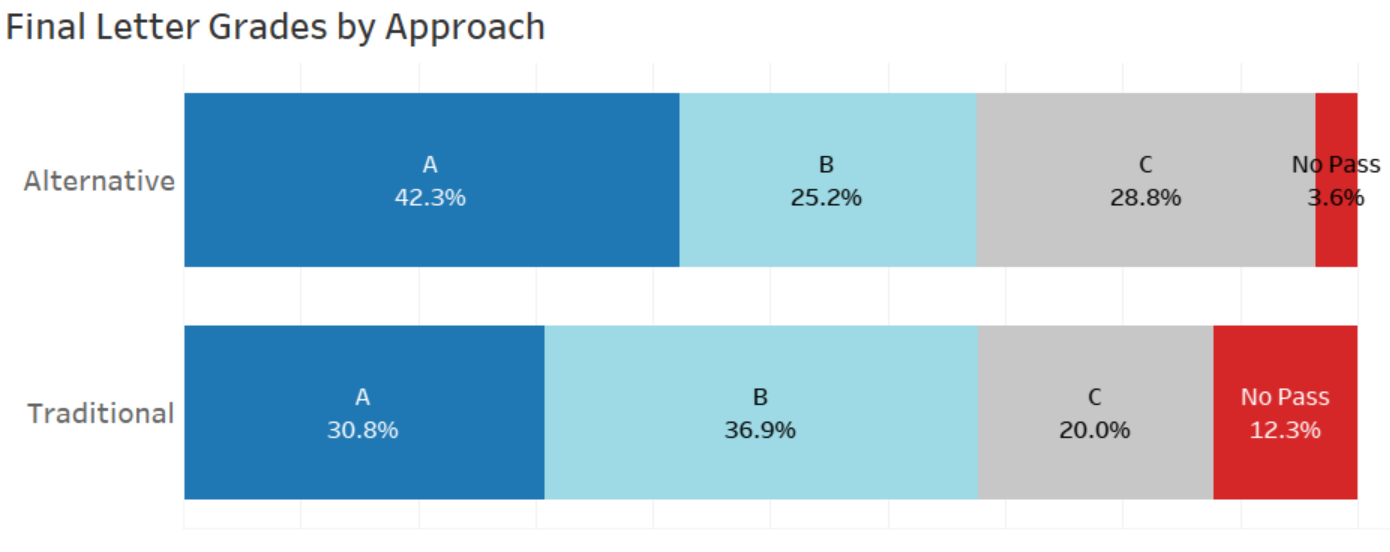

There was also a difference in final grades between the two groups. In the visualization below, the share of students earning an A or a B remained the same in each group, but many more students earned an A in the alternatively graded sections. More importantly, the number of students failing the class (defined by the school as a grade of C- or lower) was significantly lower in the alternatively graded sections than in the traditionally graded sections.[4]

The Results Were Great! What’s the Catch?

If students were learning more, feeling more confident, and earning higher grades, we should definitely roll out alternative grading for all sections, right? It wasn’t quite that straightforward. The one outstanding issue was the time investment required for grading. With the alternative grading format I tested, there were more assignments to grade, both in number and frequency (although the time needed to grade each item was significantly shorter). I was hesitant to ask the faculty to take on that burden. After I presented the results above to our administration, they agreed to provide resources for a centralized grading program staffed by teaching assistants and managed by me as the coordinator.

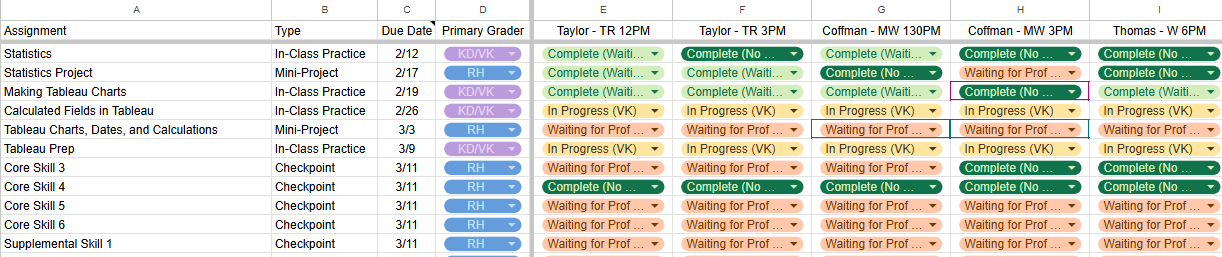

How does it work? Three undergraduates make up our shared grading team, and a Google Sheets file is used for tracking the status of each assignment (see example below). A lead grader (who has been my TA since my first year) has trained and onboarded the others, and because she is graduating this year, she is training her replacement. Their resources include a repository of answer keys for all assignments, as well as links to video snippets (shareable with students) walking through almost every component of each major assignment and Checkpoint.

For lightweight practices where only effort (vs. accuracy) is required, the team assigns credit for completed work or marks the work incomplete if warranted (Canvas only offers the labels “complete” and “incomplete”, which we translate to “success” and “retry”). For Checkpoints and Mini-Projects, the graders do a first pass, mark every place where the work is incorrect (using the annotated comments feature in SpeedGrader), and add links to video snippets where necessary. A fully correct assignment is marked complete. Otherwise, the faculty do a second pass and determine whether the assignment met the bar for “success”. We as faculty also leave our own feedback and then update the due dates for those needing to retry. This means that no student is asked to retry work without the faculty weighing in. Overall, the system has cut down on a lot of grading time and allows us to focus on students who need a bit more direction.
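The two-pass workflow can be summarized as a small decision procedure. This is only a sketch of the routing described above: the `Status` names, the `NEEDS_FACULTY_REVIEW` label, and the function signatures are hypothetical and do not correspond to anything in Canvas or in the team's tracking sheet.

```python
from enum import Enum


class Status(Enum):
    SUCCESS = "complete"      # Canvas "complete", read as "success"
    RETRY = "incomplete"      # Canvas "incomplete", read as "retry"
    NEEDS_FACULTY_REVIEW = "needs faculty review"  # hypothetical tracking label


def first_pass(kind: str, fully_correct: bool = False,
               effort_shown: bool = False) -> Status:
    """Grading-team decision. `kind` is 'practice', 'checkpoint', or 'mini_project'."""
    if kind == "practice":
        # Lightweight practices are graded on effort, not accuracy.
        return Status.SUCCESS if effort_shown else Status.RETRY
    # Checkpoints and Mini-Projects: only fully correct work is closed out here.
    return Status.SUCCESS if fully_correct else Status.NEEDS_FACULTY_REVIEW


def second_pass(meets_bar: bool) -> Status:
    """Faculty decision for work the grading team could not mark complete."""
    return Status.SUCCESS if meets_bar else Status.RETRY


# A Checkpoint with errors goes to faculty, who decide whether it met the bar.
status = first_pass("checkpoint", fully_correct=False)
if status is Status.NEEDS_FACULTY_REVIEW:
    status = second_pass(meets_bar=False)
print(status)
```

Note that for Checkpoints and Mini-Projects the first pass never returns a retry on its own, which mirrors the rule that no student is asked to retry without faculty weighing in.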

Concluding Thoughts

Given the results above, all K303 faculty began teaching using the alternative grading method in the Spring of 2025. Students have generally rated the system favorably, and we continue to see a very high share of students achieving success across the learning objectives / skills. I have continued to make tweaks each semester, and am waiting for a day when Canvas allows adaptations for alternative grading schemes.

I’d like to thank Nolan Taylor and Michael Thomas (the faculty who taught the traditionally-graded sections for this study - and now teach K303 using alternative methods!) for their flexibility, willingness to collect data, and overall embracing of the adventure that is alternative grading.

[1] To address the concern that differences in student outcomes could be attributed to differences in teaching styles between me and the other faculty, I compared overall student outcomes (using GPA) from a previous semester in which all sections were traditionally graded and found no significant difference.

[2] Core Skill 1 requires a few components to pass (in addition to just a Checkpoint). This skill is more about navigating basic files, troubleshooting, etc. It wasn’t assessed on the exams in the traditional sections, so it is excluded from this chart.

[3] Mid-semester gives enough time for reflection but also for changing course in the case of feeling off-track. End-of-semester results showed the same patterns, although response rates were lower.

[4] The differences in GPA results are statistically significant at the .01 level. A Mann-Whitney U test (also called the Wilcoxon Rank Sum test) was used because of non-normality of the distribution and unequal sample sizes.
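For readers unfamiliar with the Mann-Whitney U test, here is a minimal pure-Python sketch. It uses the normal approximation with a tie correction and no continuity correction, so its p-values can differ slightly from those of a statistics package (in practice, `scipy.stats.mannwhitneyu` is the usual route). The grade-point values are made up for illustration and are not the study data.

```python
import math


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test via the normal approximation.

    Returns (U statistic for sample x, approximate two-sided p-value).
    """
    n1, n2 = len(x), len(y)
    n = n1 + n2
    combined = sorted([(v, 0) for v in x] + [(v, 1) for v in y],
                      key=lambda pair: pair[0])

    # Assign average ranks to tied values and accumulate the tie correction.
    ranks = [0.0] * n
    tie_term = 0.0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and combined[j + 1][0] == combined[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[k] = avg_rank
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1

    rank_sum_x = sum(r for r, (_, group) in zip(ranks, combined) if group == 0)
    u_x = rank_sum_x - n1 * (n1 + 1) / 2

    mean_u = n1 * n2 / 2
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u == 0:
        return u_x, 1.0  # every value is identical; no evidence of a difference
    z = (u_x - mean_u) / math.sqrt(var_u)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail probability
    return u_x, p_value


# Made-up grade points for two groups of unequal size.
alternative = [4.0, 4.0, 3.7, 3.3, 4.0, 3.0, 3.7, 4.0]
traditional = [3.0, 2.3, 3.3, 2.0, 3.7, 1.7]
u_stat, p = mann_whitney_u(alternative, traditional)
print(u_stat, p)
```

The test compares ranks rather than raw values, which is why it tolerates skewed grade distributions and groups of different sizes.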

Wow! I love this data-driven approach. Consider publishing in a peer-reviewed journal -- we need more evidence like this in the literature showing the impact of alternative grading!

I do think a notable conclusion from your study is the increase in time necessary to implement the alternative grading system. As a community college adjunct, all of the planning, implementing, and grading tasks fall entirely on me, and I'm not the only instructor trying to implement alternative grading without an administration willing to support a centralized grading team. I would love to see you apply your data analytics approach to quantify the increase in faculty time for your SBG system compared with the traditional approach -- both the overall increase in time commitment (including writing extra assessments, scheduling extra attempts, and actual grading time) and the proportion of that extra time that is allocated to your grading team.

I think your study is really impactful and has the potential to showcase both the powerful impact on our students and the real resources necessary to implement such a grading system!

A very helpful post and congratulations on your pioneering work! I have been doing a bit of research on ways for innovators like you to work with Canvas. I was struck by your last line that you are "awaiting for a day when Canvas allows adaptations for alternative grading schemes". Perhaps the day is already here! Let me pass along something I came across this morning that may help you and others move forward. This was from Gemini. Obviously since I just learned of it this morning, I have not yet had first-hand experience with it, but it should give you hope that a solution for you and others using a standards based approach is at hand.

[The following was generated by Gemini in response to my query regarding a standards-based approach while using Canvas]

The "Bridge": Interfacing with Canvas/SMS

The technical hurdle of "talking to the main system" has been largely solved by a standard called LTI Advantage (Learning Tools Interoperability).

How it works: If a teacher builds a custom AI tool or uses a FILL-specific app, as long as it is LTI 1.3 Certified, it can "plug in" to Canvas or Schoology.

The Benefit: The student works in the flexible, custom AI environment, but the "Assignment and Grade Service" (part of LTI Advantage) automatically pushes the final mastery data back into the school’s official gradebook.

Agentic Sync: In 2026, we are seeing agents that can "read" the Canvas syllabus, "act" as the instructional layer for the student, and then "report" the evidence of learning back to the administration without the teacher having to manually enter data.